My site prodfeat.ai was a React SPA. Vite 7, React 19, fast builds, looks great. One problem - search engines saw a blank page, because all rendering happened on the client. SEO score: 6.5 out of 10. For a content site with a blog, that’s a death sentence.

I knew nothing about SEO. Zero. I didn’t even know what E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) was until the agents told me I scored 4 out of 10.

Two sessions in Claude Code, and everything was migrated to Astro 5 SSG. 13 tasks. SEO score jumped from 6.5 to 8.7.

How I got to migration

A React SPA renders content on the client, so the initial HTML that Google receives contains only an empty `<div id="root">`. You can bolt on SSR, but that means a separate server, config, and cost. I chose Astro because static hosting on Vercel is free, and Next.js SSG would’ve meant rewriting routing from scratch. Astro generates ready HTML at build time and has a Content Layer API for Markdown-based blogs.
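To make the empty-root problem concrete, here is a minimal sketch of what a client that does not execute JavaScript can read from a page. It is only a rough regex-based approximation, not how any real crawler works, and the two HTML samples (including the asset path and post text) are hypothetical stand-ins, not copies of my actual pages.

```typescript
// Rough approximation of the text a non-JS client can read from a response:
// take the <body>, drop scripts and styles, strip tags, collapse whitespace.
function bodyTextWithoutJs(html: string): string {
  const bodyMatch = html.match(/<body[^>]*>([\s\S]*?)<\/body>/i);
  const body = bodyMatch ? bodyMatch[1] : html;
  return body
    .replace(/<(script|style)\b[^>]*>[\s\S]*?<\/\1>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// Hypothetical SPA shell: the body holds only a mount point and a script tag.
const spaShell = `<!doctype html><html><head><title>Blog</title></head>
<body><div id="root"></div><script type="module" src="/assets/index.js"></script></body></html>`;

// Hypothetical statically generated page: the content is already in the HTML.
const staticPage = `<!doctype html><html><head><title>Blog</title></head>
<body><main><h1>Hello</h1><p>Post text</p></main></body></html>`;

console.log(JSON.stringify(bodyTextWithoutJs(spaShell)));   // ""
console.log(JSON.stringify(bodyTextWithoutJs(staticPage))); // "Hello Post text"
```

The SPA shell yields an empty string until its JavaScript runs, while the prebuilt page already carries its text in the response.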

But I didn’t start with code.

Recon: OpenClaw + Claude Code

First I pointed my bot OpenClaw at the site. Asked it to analyze everything visible and generate reports. OpenClaw produced a list of issues - from missing meta tags to language chaos (English UI, Russian content). I brought those reports into Claude Code.

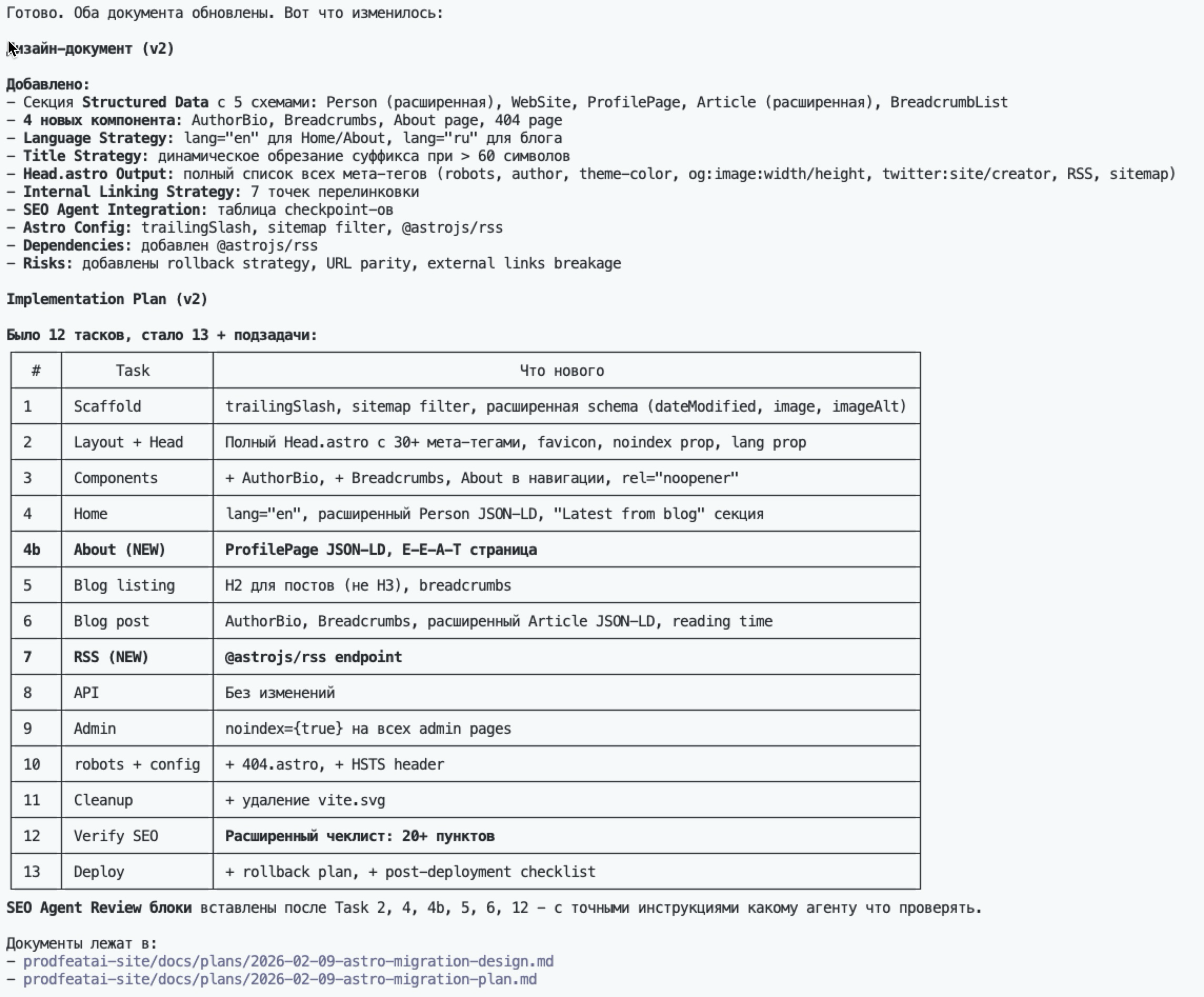

Claude Code used Superpowers skills to generate a design document: what to migrate, in what order, which SEO checkpoints to set. The result: a plan with 13 tasks.

But a plan isn’t the same as expertise. I needed someone who actually knows SEO.

10 SEO agents from GitHub

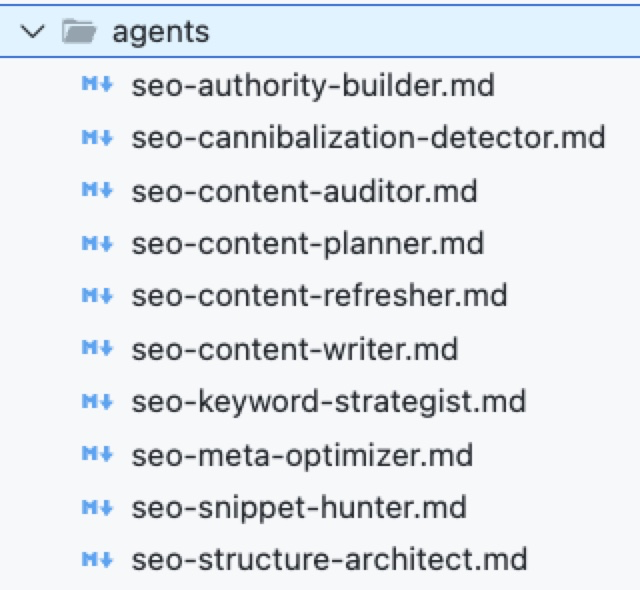

Found a GitHub link - a set of 10 specialized SEO agents for Claude Code. Each one a separate subagent with its own focus: seo-structure-architect, seo-meta-optimizer, seo-content-auditor, and 7 more. Integrated them into the project. Done.

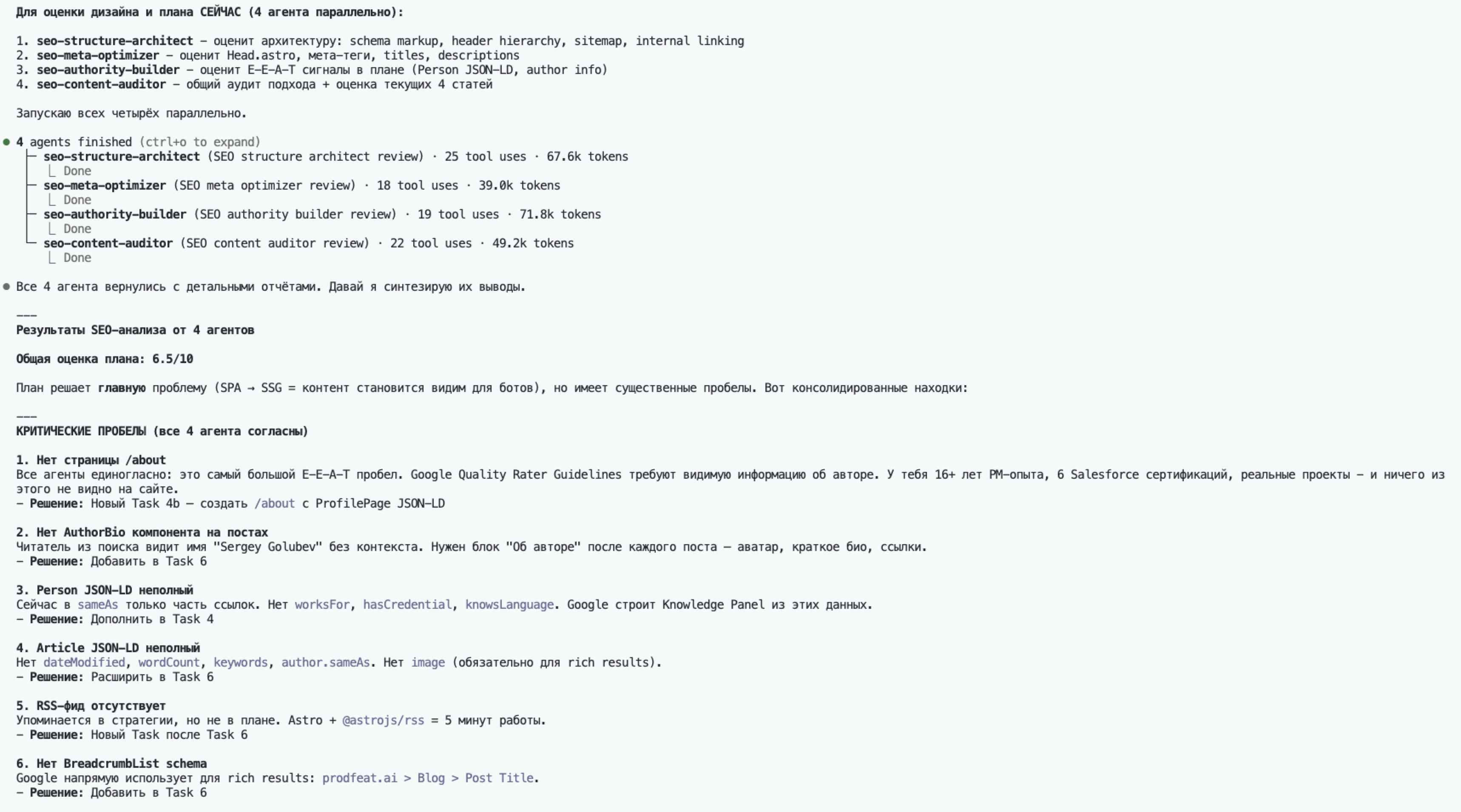

Asked Claude Code to figure out these “specialists” on its own and assign them to do a full audit of the current site and the existing plan. 4 agents kicked off in parallel. The structure-architect alone consumed 67.6k tokens and made 25 tool calls - tore the site apart piece by piece.

Audit results:

- Technical SEO: 7/10

- Content Structure: 5/10

- E-E-A-T: 4/10

E-E-A-T at 4/10 - no AuthorBio, no JSON-LD, no structured data, no internal linking. I would never have thought to check any of that myself.

What I did

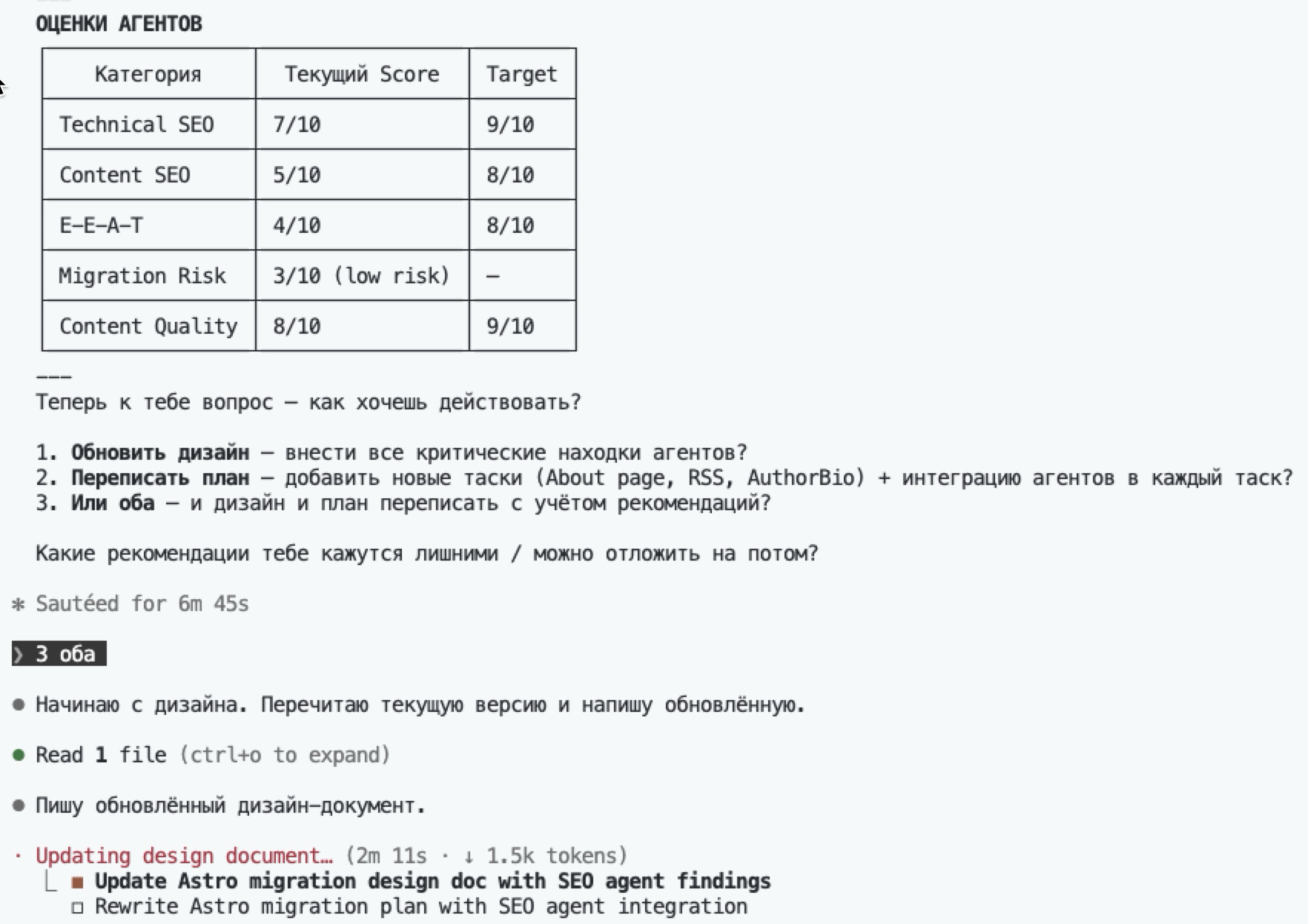

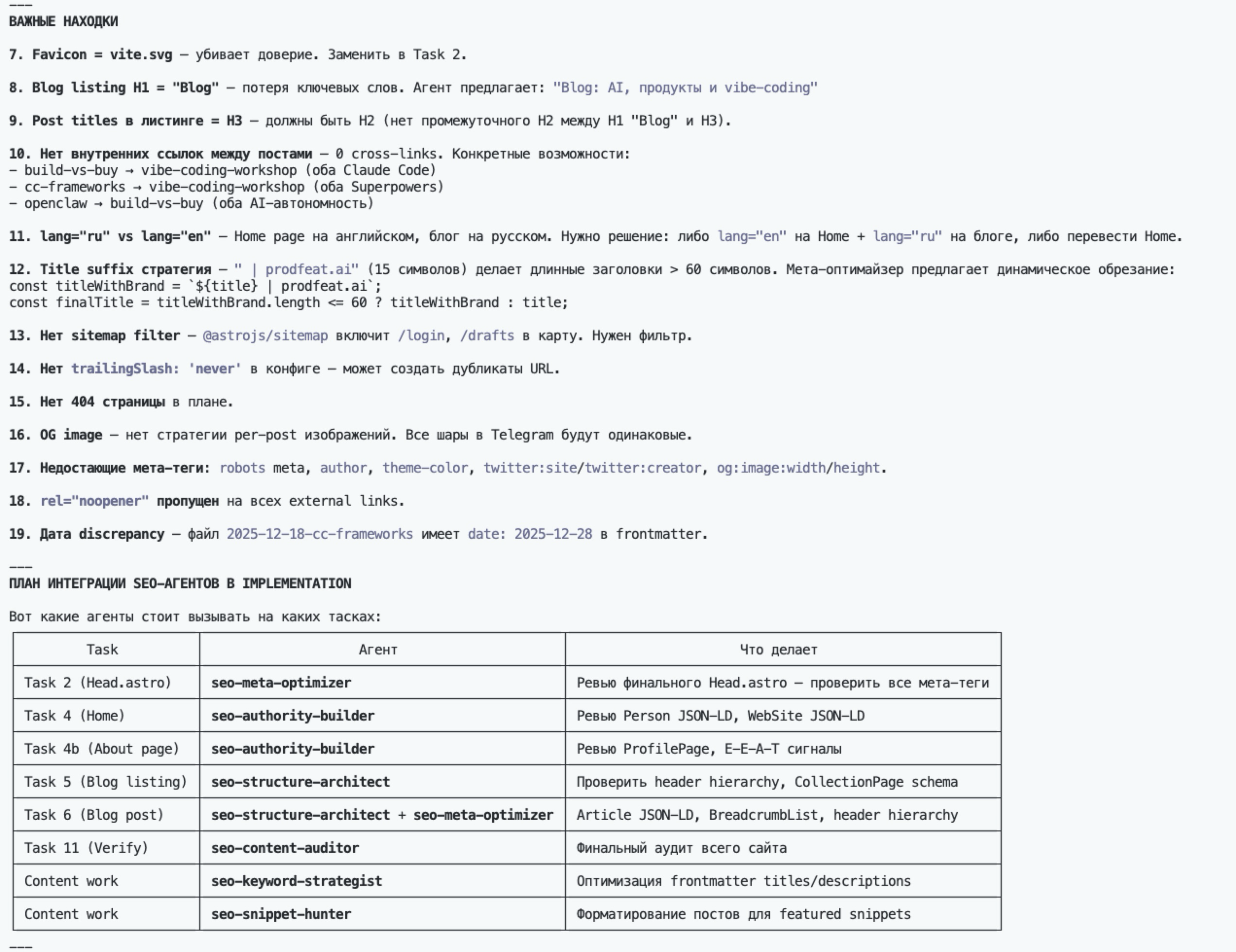

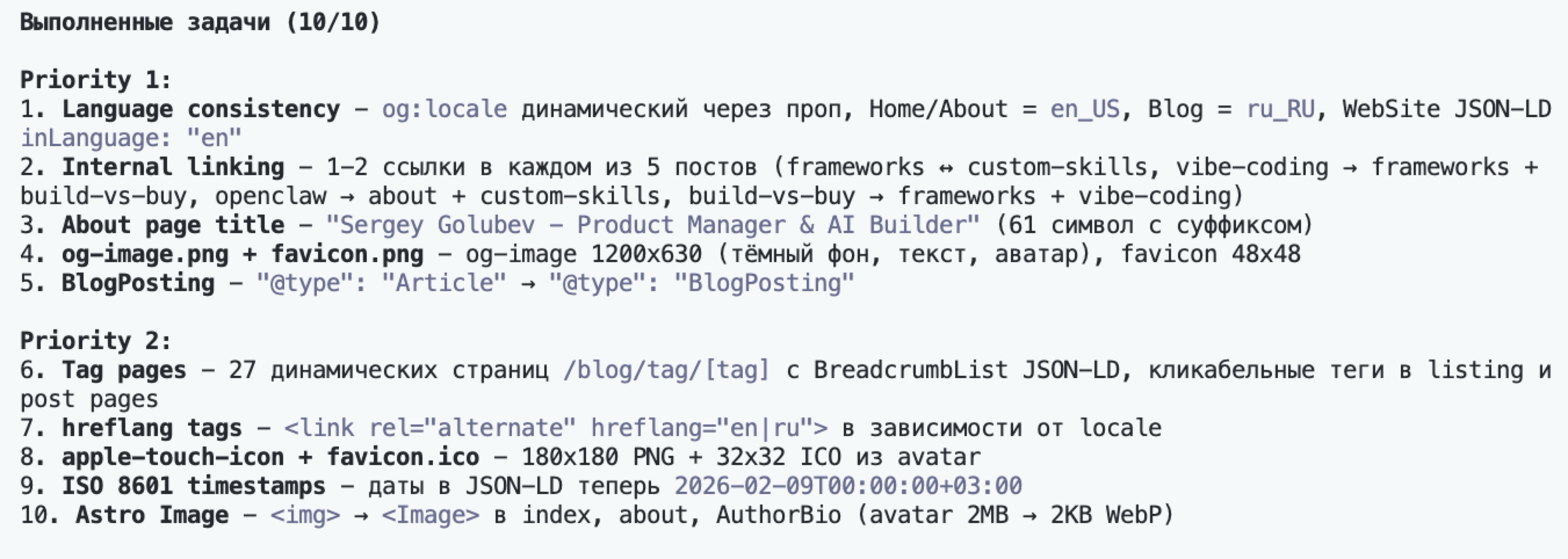

Based on the audit, I updated the design document to v2 - now with specific SEO tasks from the agents.

Key decisions and why:

- Astro 5 with Content Layer API - Markdown blog out of the box, no plugins needed

- React Islands - to avoid rewriting interactive components from scratch

- 5 JSON-LD schemas (Person, WebSite, ProfilePage, Article, BreadcrumbList) - agents caught these, I would’ve missed them

- Language strategy - one language per page, lang="ru" at root

- Admin panel with JWT auth - to manage content without redeploying
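For readers who have never seen JSON-LD, here is a minimal sketch of two of the five schemas listed above, Article and BreadcrumbList. The helper names, the fields I chose, and the example.com URLs are all illustrative assumptions, not the code the agents generated for my site. Check the schema.org and Google structured data docs for which properties matter for your pages.

```typescript
interface Crumb {
  name: string;
  url: string;
}

interface ArticleInput {
  headline: string;
  url: string;
  authorName: string;
  datePublished: string; // ISO 8601 date, e.g. "2025-01-15"
}

// Hypothetical helper: builds a schema.org Article object.
function articleJsonLd(input: ArticleInput) {
  return {
    "@context": "https://schema.org",
    "@type": "Article",
    headline: input.headline,
    url: input.url,
    datePublished: input.datePublished,
    author: { "@type": "Person", name: input.authorName },
  };
}

// Hypothetical helper: builds a schema.org BreadcrumbList with 1-based positions.
function breadcrumbJsonLd(crumbs: Crumb[]) {
  return {
    "@context": "https://schema.org",
    "@type": "BreadcrumbList",
    itemListElement: crumbs.map((crumb, index) => ({
      "@type": "ListItem",
      position: index + 1,
      name: crumb.name,
      item: crumb.url,
    })),
  };
}

// Serializes the object into the script tag that goes into the page <head>.
// "<" is escaped so a stray "</script>" inside a string cannot end the tag early.
function toScriptTag(data: object): string {
  const json = JSON.stringify(data).replace(/</g, "\\u003c");
  return `<script type="application/ld+json">${json}</script>`;
}

// Example with placeholder values.
const exampleTag = toScriptTag(
  breadcrumbJsonLd([
    { name: "Home", url: "https://example.com/" },
    { name: "Blog", url: "https://example.com/blog/" },
  ]),
);
console.log(exampleTag);
```

With a static site generator, tags like this are produced once at build time, so they are already in the HTML a crawler receives.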

The first 2 tasks took an hour and a half out of four hours. That covered Astro config, routing migration, and component adaptation. I hit React Islands hydration errors 3 times because Astro handles client:load directives differently than I expected. Content Layer API worked on the first try, though.

Result

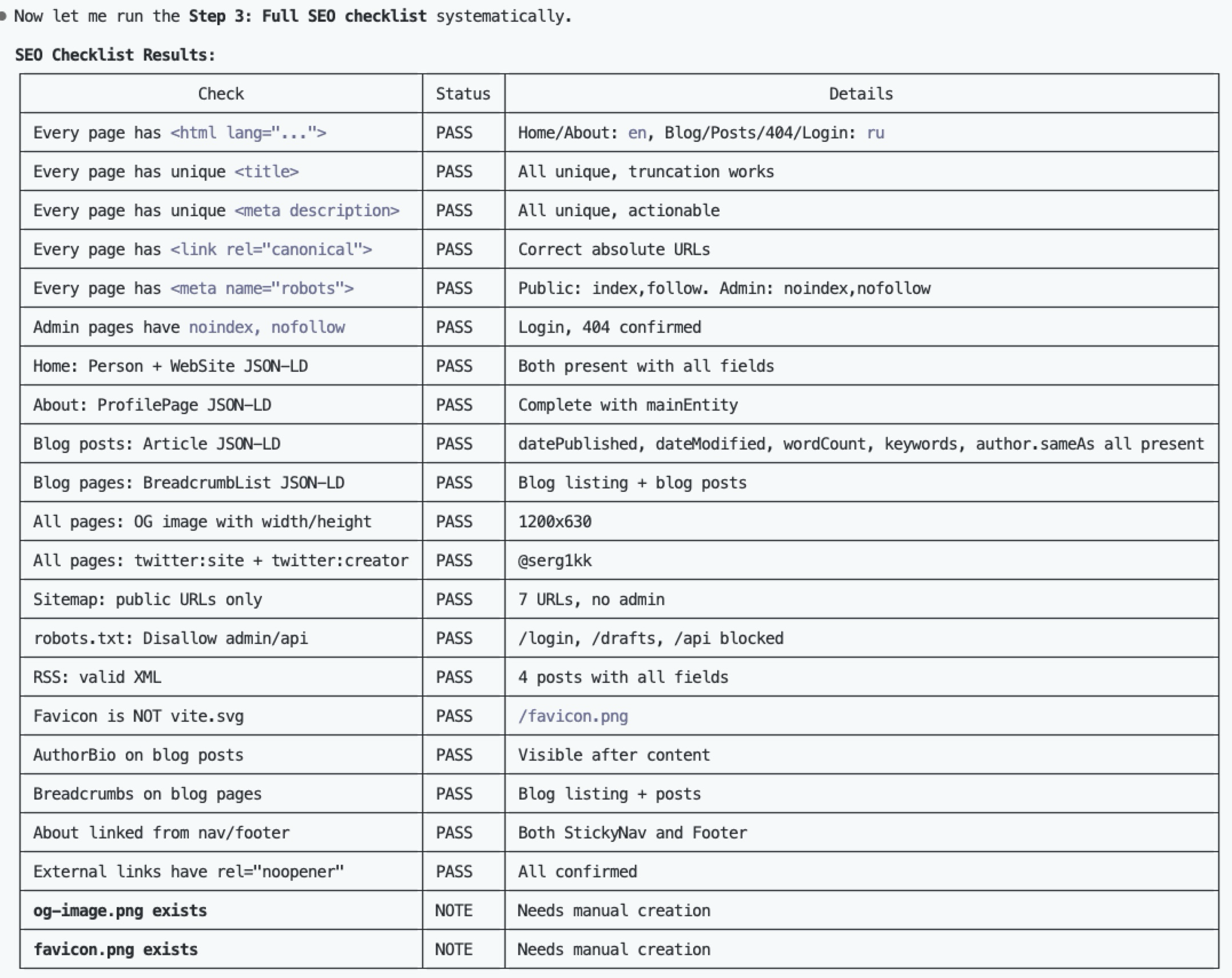

Full SEO checklist after migration - all PASS.

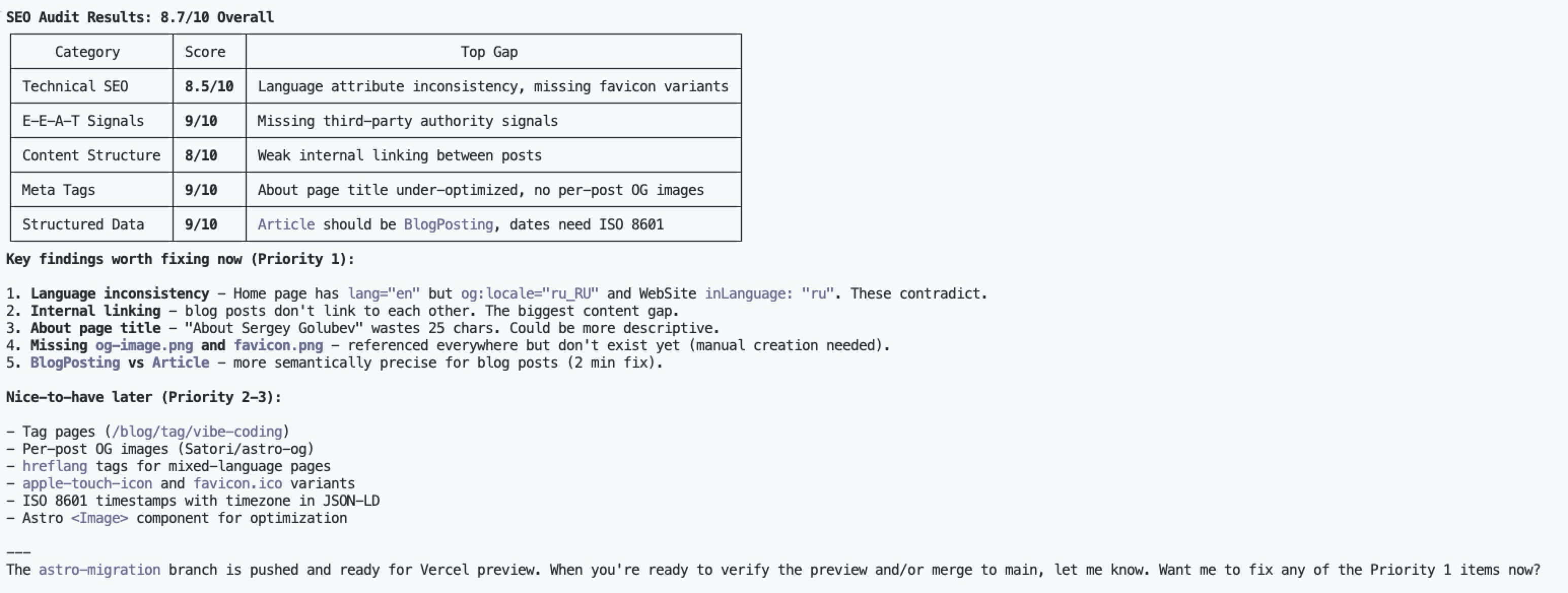

Final audit:

- Overall: 8.7/10 (was 6.5)

- Technical SEO: 8.5/10

- E-E-A-T: 9/10

- Meta Tags: 9/10

- Structured Data: 9/10

- Content Structure: 8/10

34% improvement with Claude Code. No SEO consultant. No manual audit.
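Where the 34% comes from: it is the relative gain from 6.5 to 8.7. The sketch below redoes that arithmetic. It also shows that the mean of the five category scores listed above works out to 8.7. I don't know how the agents actually computed the overall score, so treat that match as an observation, not as their formula.

```typescript
// Scores copied from the final audit list above.
const overallBefore = 6.5;
const overallAfter = 8.7;
const categoryScores = [8.5, 9, 9, 9, 8]; // Technical SEO, E-E-A-T, Meta Tags, Structured Data, Content Structure

// Arithmetic mean of a non-empty list of numbers.
function mean(values: number[]): number {
  return values.reduce((sum, value) => sum + value, 0) / values.length;
}

// Relative change from one score to another, in percent.
function relativeGainPercent(from: number, to: number): number {
  return ((to - from) / from) * 100;
}

console.log(mean(categoryScores).toFixed(1));                            // "8.7"
console.log(relativeGainPercent(overallBefore, overallAfter).toFixed(1)); // "33.8", i.e. about 34%
```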

What I learned

The GitHub SEO agents were the key move. Found a link, copied the files, ran them - and got expertise I didn’t have and never would have. Without them I would’ve just moved the SPA to Astro and stopped there. JSON-LD, E-E-A-T, structured data - all missed.

Astro is genuinely good for content sites. Content Layer API, island architecture, zero JS by default. React stayed only where interactivity was needed - admin panel and a couple of animations. Astro 6 is already in beta, so the stack will stay relevant.

Not sure yet how this will affect real organic traffic. The scores look good, but Google needs time to reindex. I’ll check in a month and report back.