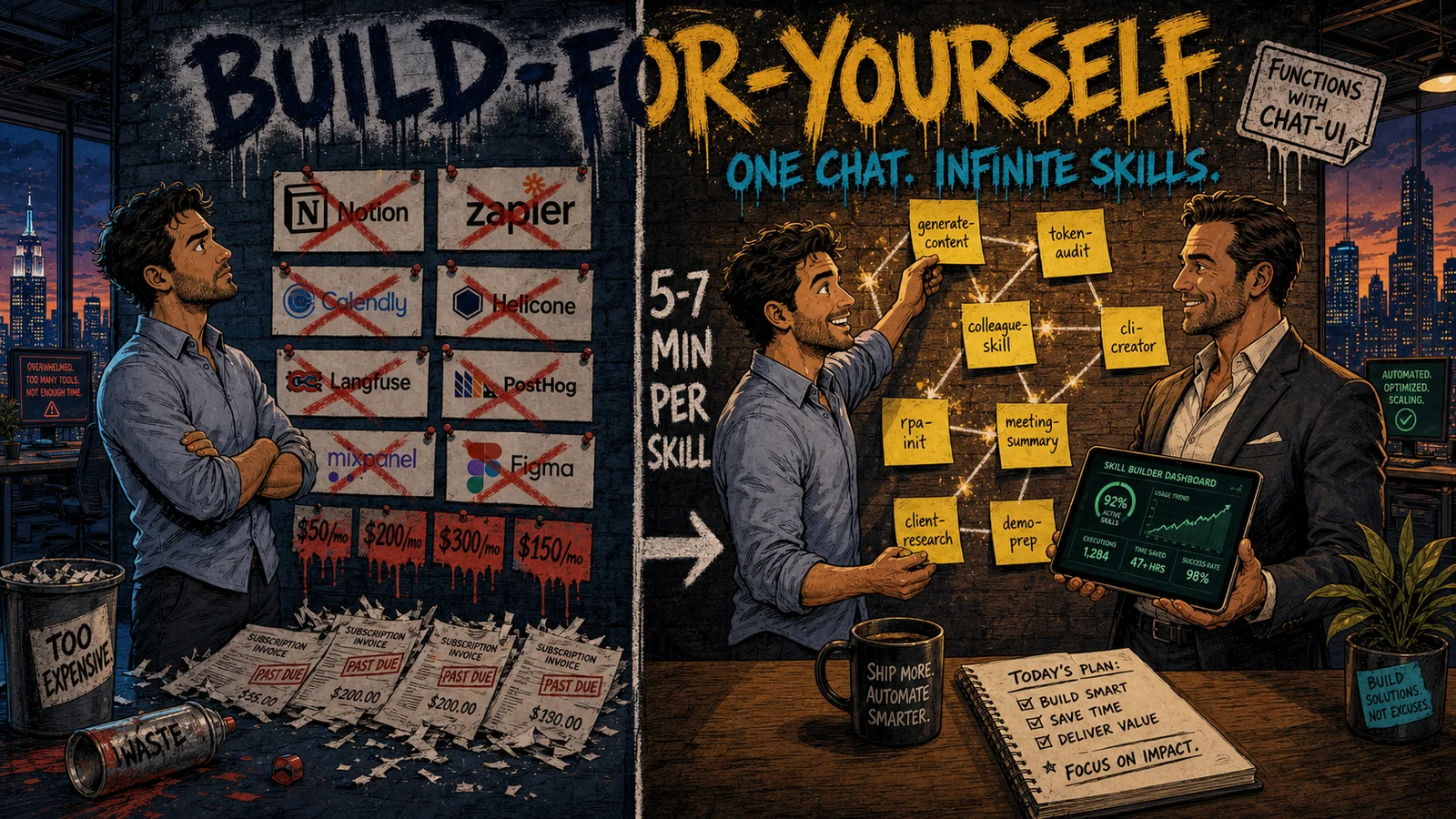

One chat with Claude. Inside it: 70+ of my skills - digests, podcast rendering, processing meetings, reviewing student homework, prepping demos for sales, generating images for posts, and dozens more for work and beyond. About a third of them are polished; the other two thirds are still in progress or won’t survive at all: tried it, didn’t work, deleted. That’s the normal dynamic. From the moment Anthropic officially opened Skills as a standard in October 2025 until today (ten months of pure practice), I’ve built up a library that strips out routine, adds things I never had the time or skills for before, and sometimes does things no off-the-shelf service exists for in the first place. The SaaS-stack alternative: subscriptions of $200-300/mo for products where you don’t need 70-80% of the functionality, plus the same again on integrations, and many of the personalized scenarios you actually want simply aren’t there.

And this isn’t a developers’ story anymore. Skills became accessible to people in any profession: a marketer, a salesperson, a lawyer, an accountant. In effect this is a new form of application: a function with a chat UI instead of a separate screen, assembled through a short free-form dialogue with an agent.

What happened in six months

The market consolidated into one form very fast, and the timeline helps. On October 16, 2025 Anthropic released Agent Skills as a public standard; on December 18 of the same year the standard went open (agentskills.io, the anthropics/skills repo on GitHub), and as of May 2026 the repo has 131K+ stars. On January 20, 2026 Vercel launched skills.sh: a catalog plus an npx CLI for installation. At Skills Night in San Francisco the numbers were announced: 69,000+ skills, 2,000,000 installs.

A single format is supported by 18+ platforms: Claude Code, Cursor, Codex, GitHub Copilot, Gemini CLI, Manus, OpenCode, Goose, Roo, Kiro, Amp, Windsurf, Trae, and others. Since February 2026 every skill goes through an automatic security audit by Gen, Socket, and Snyk before landing on the leaderboard. Manus adopted the SKILL.md standard in January and launched a Team Skill Library on top of it; by spring 2026 Manus became part of Meta (or hasn’t yet?). OpenAI rolled out Codex CLI with a built-in skill-creator and skill-installer: a configured AI agent for a non-technical person in 15-20 minutes.

This isn’t an “early stack” anymore, it’s a standard the market accepted in half a year.

Skill = application 2.0

Any application or microservice: a function with an interface. A skill: the same function, where the chat with the agent is the UI. The same chat is used both to create the skill and to run it afterward.

This changes the ROI on “your own tool”: from weeks for a full-blown service (frontend, backend, deploy, support) down to 5-7 minutes of conversation with an agent. You explain by voice or text what should happen, what the inputs are, what the outputs are; you get a ready SKILL.md that’s invoked via /skill-name or activates itself based on its description.
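For orientation, here is a minimal sketch of what the resulting file can look like, assuming the common layout: YAML frontmatter with `name` and `description`, followed by free-form instructions. The skill name, description, and steps are hypothetical, and a temp directory stands in for the real skills folder.

```python
from pathlib import Path
import tempfile


def render_skill_md(name: str, description: str, steps: list[str]) -> str:
    """Render a minimal SKILL.md: YAML frontmatter plus numbered instructions."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        f"# {name}\n\n"
        "## Steps\n\n"
        f"{numbered}\n"
    )


# Hypothetical skill, for illustration only.
skill_text = render_skill_md(
    name="meeting-summary",
    description=(
        "Summarize a meeting transcript and draft follow-up tasks. "
        "Use when the user shares meeting notes or a transcript."
    ),
    steps=[
        "Ask for the transcript if it was not provided.",
        "Extract decisions, owners and deadlines.",
        "Return a summary and a follow-up list.",
    ],
)

# Assumed layout: <skills-dir>/<skill-name>/SKILL.md.
skill_dir = Path(tempfile.mkdtemp()) / "meeting-summary"
skill_dir.mkdir(parents=True)
(skill_dir / "SKILL.md").write_text(skill_text, encoding="utf-8")
print(skill_text)
```

The description line carries most of the weight: it is what the agent reads when deciding whether to activate the skill on its own, so it should say both what the skill does and when to use it.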

Compare the economics. The average SaaS subscription costs $20-50/mo, and a typical solo stack, based on conversations in the OPC community, adds up to 8-12 tools at $150-300/mo. Zapier on heavy load runs $50-300/mo and is easily replaced by n8n at $5/mo or a skill at $0. Your own skill: 5-7 minutes of development plus token cost to run (cents). Behind every subscription stands someone else’s logic; your own skill is your logic, your data, your adaptation to your workflow. Found a token leak in a Claude Code log? Add a line to the token-audit skill and run it again. You don’t open Helicone, you don’t pay for a seat.

In the background runs a broader trend the community calls the “dark matter” of AI software: the rise of “for-myself” apps that will never become products. They don’t show up in analyst charts, they don’t sell ads, but they solve the concrete tasks of one concrete person. Skills are the format this dark matter lives in. Many practitioners already have 10-25 personal skills, and they will never package them as a commercial product. They don’t need to.

Two layers of skills: infrastructure and business

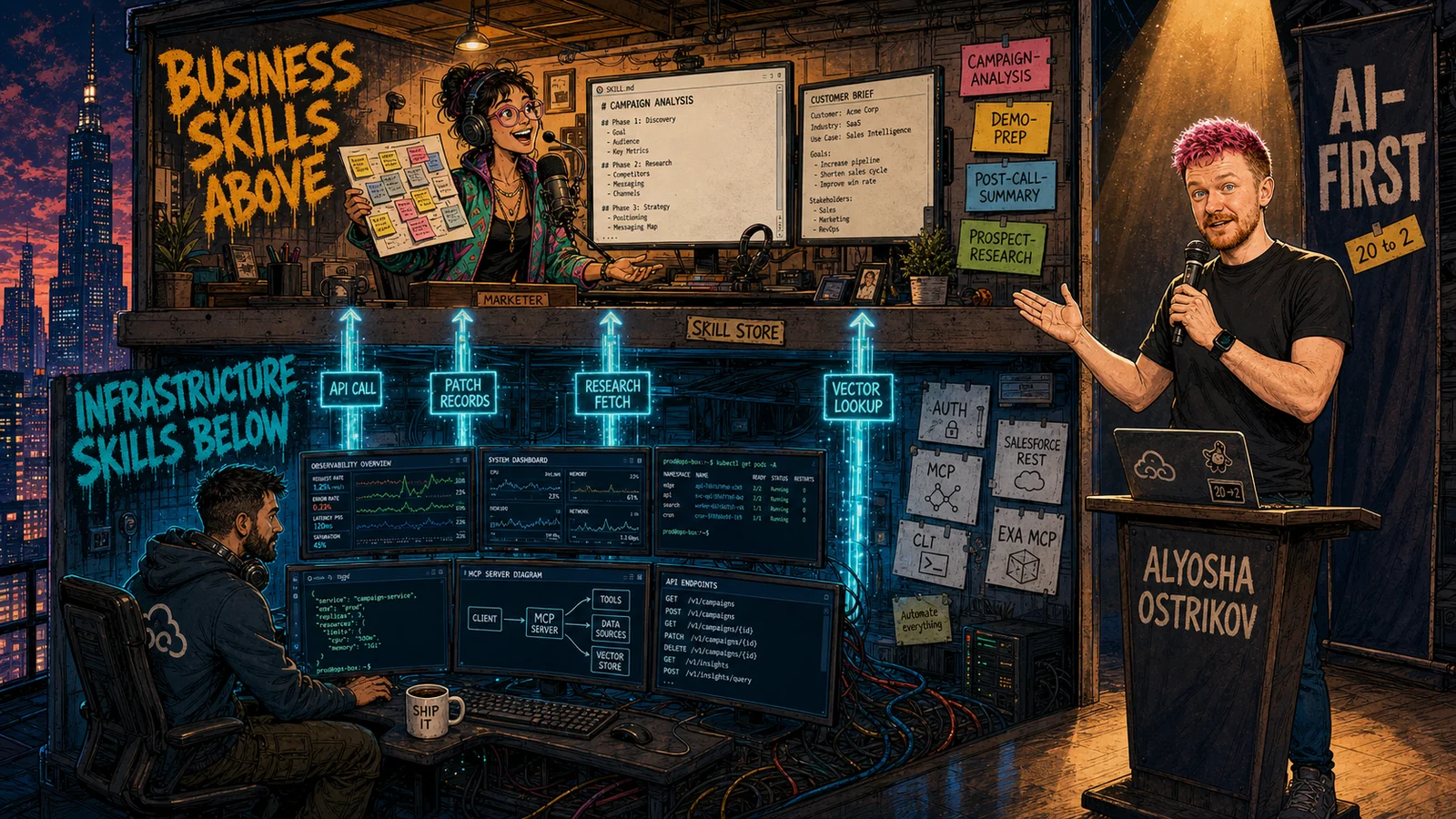

In a recent stream by Alexey Ostrikov (Head of Software Development at Yandex) about the AI-First company, there’s a sticky idea: skills inside an organization split into two layers.

The picture of an AI-First company, the way Alexey frames it, is almost embarrassingly simple. Before: a person takes a step, hands documents to another person, who hands them to the next. In the future: an agent takes a step, hands off to another agent, who hands off to the next. People improve agents and watch the result. At the top of the pyramid sits one CEO in a chair, watching agents run the business. The “one CEO + a million agents” version is impossible right now for three reasons: a human doesn’t have enough attention, doesn’t have the expertise to evaluate every agent’s output, and technical debt piles up. So the realistic target for the next couple of years: one strong team writes a shared agent core (an agent harness), and dozens of teams on top of it write skills for their business processes.

A nice contrast: 2024 vs 2025 at Yandex, in Alexey’s words. In 2024 a guy named Igoryan spent 3 months polishing one process: pulling tickets with attached documents, parsing, dropping into a spreadsheet. One process, three months, low-level Python. Tens of thousands of such processes across the company. By 2025 the architecture decoupled: one team invests in a shared harness, and dozens of teams (not necessarily programmers) implement business logic at the skill layer on top of it. In parallel, not in sequence. That’s the phase transition.

Layer 1: infrastructure skills. Written by developers. They reach into the company’s internal systems: tickets, wiki, documents, ClickHouse, organizational data, traces, authorization. They know how to talk to each system, which auth methods, which kinds of scripts (CLI, curl) get embedded into a SKILL.md. This is a separate caste of people, and the work is rough: maintaining different auth methods and different kinds of scripts inside md files is, as Ostrikov puts it, “a separate kind of pain”. Without this layer, business skills don’t exist.

Layer 2: business skills. Written by non-technical employees by voice: a marketing manager, sales, lawyer, accountant. You take a person, sit next to them, hand them an agent with the infrastructure skills already wired in. Then for 20 minutes they dictate their process: how they pick a segment, how they prepare an offer. At the end, the prompt: “Now create a skill based on this conversation”. Done: a segment-selection skill, or an offer-generation skill, or a document-assembly skill on top of existing infrastructure. The first run works badly. A few weeks of polishing next to a person who actually understands the business process, and they transfer part of their own experience into the skill. You end up with a mini junior analyst that this person “hired” for themselves. After that you teach them to vibe-code the rest of the skills for their own tasks, and they go off into independent flight.

And for all of this you need separate infrastructure: an internal Skill Store inside the company. A place where you can write a skill by voice, ship the artifact, share it with the team, find others’. With access to infrastructure skills out of the box. Essentially an analog of the App Store, only inside one company. Ostrikov’s quote on this is blunt: “Without this key element, nothing is possible”. If a company doesn’t have such infrastructure, business skills either don’t get written at all, or they get written in someone’s local folder and die when the person leaves.

The architectural piece that resonated for me personally: local agent → cloud agent. First you work the skill out locally, on a laptop, with fast iterations. When it’s tuned to where it “works 19 out of 20 times”, you push it to the cloud and put it on a cron or a trigger. While you’re running it locally, the laptop is open, the skill is available only to you. The cloud agent runs 24/7, others can use it. Caveat: at the second stage you need internal infrastructure to host these digital “employees” somewhere. Small teams may find it easier not to build it, but they can also get away with a Mac mini under the desk or a cheap VPS.

A concrete case from Ostrikov’s team: one manager from the payments team has been trained to vibe-code skills. His skills summarize meetings and immediately compile follow-up documents. He saves himself 2-3 hours a day. From there he started selling skills to other Yandex managers: he visits colleagues, shows them, helps stand it up on their side. Viral spread without top-down pressure and without a CTO mandate. By the way, that’s one of the strongest pieces of evidence that the form itself works: the effect on the user is tangible enough that he himself starts spreading it.

Alexey separately captures the rule “20 people in a workshop → 2 light up”. You take a group of 20 non-technical employees, run a workshop with all the circles of hell: install, setup, first skill. Out of 20, 18 don’t have their eyes light up. Two do, and they want to keep going on their own. That’s normal and predictable. The organizer’s task: spot those 2, don’t let them go, and spread the practice across the company through them. They’ll become local champions; it’s not CTOs on podcasts who roll skills out into real teams, it’s those two out of twenty. Curious whether the ratio works the same way in Russian-speaking teams. My gut says it depends on the makeup: in some places it’ll be 1 out of 20, in others 4, and not every 2 will actually become champions in the long-distance sense. There’s no real data; for now I act on the rule “look for the eyes that light up, don’t try to convince the rest”.

And one more pattern from the same stream: digital personalities, not anonymous agents. Better to create “Neuro-Maryvanna from treasury” and “Neuro-Vasya from the PM department” with a name and a set of 7-8 skills under the hood than a faceless “Assistant #3”. Adoption is higher: people subconsciously talk to a person, not a tool. Sounds infantile, works better than “AI assistant 4.0”.

Ostrikov frames AI-fluency as a two-month skill, not a university degree. Experience in profession (7-8 years, senior level) × expertise × AI-fluency = a new leadership role. And anyone whose “eyes have lit up” only needs 2 months to learn to talk to an agent, pick a model, manage context, call into skills. Not Harvard. And it’s exactly these people, by his forecast, who in a year will be carrying the transformation of their companies.

The main risk of the whole construct: “error multiplication” if there are no EVALs between the agent steps. If at every node there’s a human reviewer, the speedup is +20% and that’s the ceiling. If EVALs are embedded into the skills or split out into a separate validator skill, the speedup becomes multiplicative. Without them, agent-1 produces a crooked output, agent-2 takes it as input, agent-3 confidently builds the next step on it, and what spills into production is, as Ostrikov puts it, a compounding mess. That’s why in the same team they started adding an EVALS section into infrastructure skills with test cases and expected output: the skill checks itself on every run. In my own generate-content (more on it below) there’s a similar pattern: a separate VERIFY stage with 5 critics and written tests at every step. Without it, long pipelines degrade.
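The arithmetic behind “error multiplication” is easy to sketch. Assumptions (mine, not Ostrikov’s): every step is right 19 times out of 20, steps fail independently, and the validator in the second function is idealized, meaning it catches every bad output and triggers a retry.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Chance that an unchecked chain is right end to end (independent steps)."""
    return per_step ** steps


def gated_success(per_step: float, steps: int, retries: int = 1) -> float:
    """Same chain, but an idealized validator after each step forces a retry on bad output."""
    step_ok = 1 - (1 - per_step) ** (retries + 1)
    return step_ok ** steps


PER_STEP = 19 / 20  # "works 19 out of 20 times"

for n in (1, 3, 5, 10):
    print(
        f"{n:>2} steps: no checks {chain_success(PER_STEP, n):.2f}, "
        f"validator + 1 retry {gated_success(PER_STEP, n):.2f}"
    )
```

Under these assumptions a ten-step chain without checks comes out right only about 60% of the time, while the gated version stays near 98%. Real validators miss things, so treat the second number as an upper bound.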

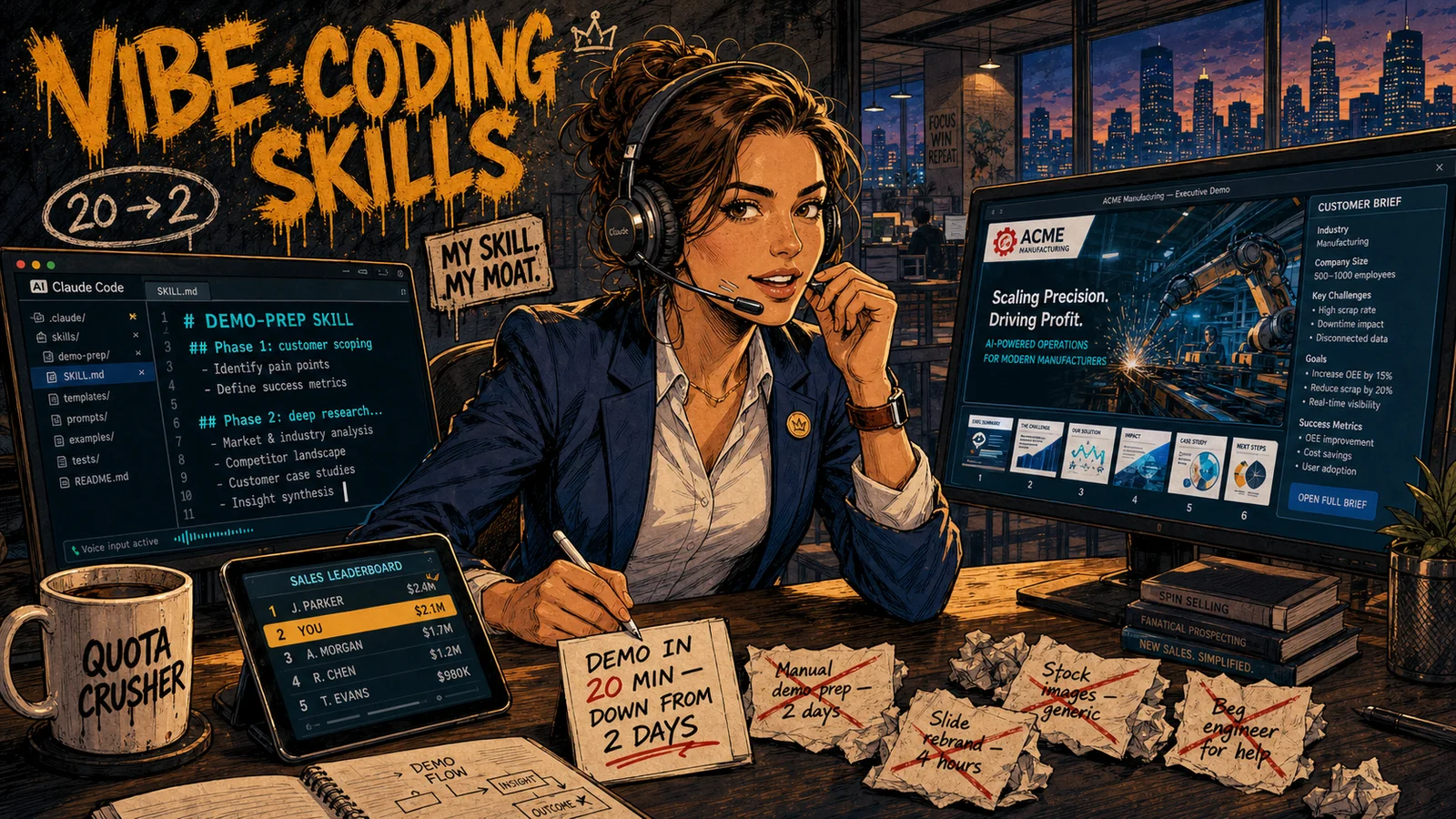

A personal example: a skill for prepping sales-team demos

At my company this is one of the recent cases: it’s the first time I’m trying to hand over a finished but raw skill to people from non-engineering departments. The sales team “volunteered” first, and that’s where I’m running it in. The task is classic: a sales rep prepares a demo for a new client, and for each client the demo environment has to be personalized. Images, copy, industry-specific examples, real terms from their industry. This used to take a day or two of manual work. Some sales reps did this work poorly, some put it off and ran demos with default mock data.

What I ended up assembling: a demo-prep skill that takes 15-20 minutes for a specific client. The sales rep launches it in Claude Code and goes through eight phases of dialogue. The skill leads them by the hand, doesn’t let them skip steps, and fails fast at every stage where something is off. Eight phases:

- Phase 0: pre-flight checklist. We check that everything is in place: is the Exa MCP connected (needed for research), are Unsplash and Pexels keys set up (free, one-time 4-minute registration), are session settings in the demo environment correct (IP lock disabled, otherwise auth will fail), is VPN off (otherwise the service’s anti-fraud will freeze the user). If anything is off, the skill prints a walkthrough and doesn’t proceed. This is the kind of necessary rudeness: 30 seconds of checking beats 20 minutes of murky debug in the middle of Phase 5.

- Phase 1: customer + audience scoping. Sales answers 4 blocking questions: client company name, industry (short label), who the demo will be shown to (internal employees / product users / partners / custom), sales context (what’s the deal, who’s in the room). All four fields go into the session file. Without them the skill won’t move on: otherwise the output ends up being a generic, averaged-out demo that the sales rep is later embarrassed to show.

- Phase 2: deep customer research via Exa MCP. The skill goes out to the web through Exa on its own and assembles a 300-500 word customer brief: what the company does, key terminology, recent initiatives, real names of their certifications or training programs (where applicable). The queries are built to catch facts the prospect will recognize on first sight. The brief comes back to sales for verification: “Anything to fix or add before I start?”. If sales edits it, the skill researches again.

- Phase 3: org connection + safety check. Sales picks a demo environment from saved ones or connects a new one. The skill classifies it: sandbox / dev / trial / prod. On a prod environment the skill blocks by default (an explicit override is required). Before every login a network check runs to rule out an accidental login from a VPN: this mechanic already saved a real account once that would otherwise have been auto-frozen.

- Phase 4: record pull + scope decision. The skill pulls all the needed records from the demo org. Sales decides what we’re rebuilding: everything or only some of the data (for example, only Achievements and Media). This matters: rebuilding “everything” for a major client is fine; rebuilding “everything” for a surface-level demo call is overkill and eats time. Sales controls scope, the skill just does what it’s asked.

- Phase 5: image asset prep. The most loaded phase. The skill prepares a verified image pool: 16:9 and 1:1 candidates for the client’s industry. Sources: first the client’s official site (logos, product screenshots, corp photos), then Unsplash and Pexels stock by semantic queries. The pool is assembled BEFORE the per-object review so that on the next step sales doesn’t wait 30 seconds each time, but just clicks “ok / next”. This is the difference between “the skill takes 15 minutes” and “the skill takes an hour”.

- Phase 6: object-by-object review. The skill walks sales through one class of objects at a time: here’s Media (10 items), here’s Achievement (5), here’s Learning Plan. For each object, sales says “ok” or “not ok, redo”. If “not ok” - the skill regenerates immediately with the correction. This is the most conversational phase, and also the most valuable: at this moment sales actually owns the personalization, doesn’t hand it off to the LLM.

- Phase 7: push (CSP + composite PATCH). The final step. The skill first auto-registers all image domains in the demo org as CSP Trusted Sites (otherwise images won’t render), then runs a composite PATCH with fail-fast: first error, rollback, backup in hand.

And on top of that Phase 8: post-flight checklist for the sales rep: Experience Cloud CSP check (if the demo touches it), visual spot-check, fallback warnings (“if such-and-such image didn’t load, swap to this one”), a copy-paste-ready rollback script. The “revert to as-was” button has to always exist.
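The two safety mechanics from Phases 3 and 7 (block on prod by default, fail fast with a rollback) can be sketched in a few lines. This is an in-memory illustration, not the skill’s actual code: `FakeDemoOrg`, `PushError`, and `push_with_rollback` are hypothetical names, and a real implementation would call the platform’s API instead of mutating a dict.

```python
from dataclasses import dataclass, field


class PushError(Exception):
    """Raised when a single record update fails."""


@dataclass
class FakeDemoOrg:
    """In-memory stand-in for a demo org (hypothetical)."""
    env_type: str  # "sandbox" | "dev" | "trial" | "prod"
    records: dict[str, dict] = field(default_factory=dict)

    def patch(self, record_id: str, changes: dict) -> None:
        if record_id not in self.records:
            raise PushError(f"unknown record {record_id}")
        self.records[record_id] = {**self.records[record_id], **changes}


def push_with_rollback(org: FakeDemoOrg, updates: dict[str, dict],
                       allow_prod: bool = False) -> None:
    """Apply all updates or none: first error restores the backup and re-raises."""
    if org.env_type == "prod" and not allow_prod:
        raise PermissionError("prod environment: explicit override required")
    backup = {rid: dict(rec) for rid, rec in org.records.items()}
    try:
        for record_id, changes in updates.items():
            org.patch(record_id, changes)
    except PushError:
        org.records = backup  # fail fast: undo everything applied so far
        raise


org = FakeDemoOrg("sandbox", {
    "media-1": {"title": "Default"},
    "ach-1": {"title": "Default"},
})
try:
    # The second update targets a record that does not exist.
    push_with_rollback(org, {
        "media-1": {"title": "Acme onboarding"},
        "missing": {"title": "x"},
    })
except PushError as err:
    print("rolled back:", err)
print(org.records["media-1"]["title"])  # still "Default"
```

The point of taking the backup before the first write is that the rollback script from Phase 8 then has something concrete to restore from, regardless of where the push stopped.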

On time: building the skill took me ~4 hours of focused work. After that each demo will cost (by my count) the sales rep 15-20 minutes, vs a day or two of manual work. There’s no off-the-shelf solution for this specific task on the market (I looked). The combination is too specific: the ecosystem where it’s needed, customer research + stock from two APIs + custom PATCHes into the demo org + safety rules of a specific platform. To get this as a service, you’d have to commission custom development: weeks of work and tens of thousands of dollars. And it’s still not clear they’d build it right (more likely not, or they’d build a first version that would be hard to keep improving and supporting).

This is exactly Ostrikov’s two-layer architecture on a small team. I (as the technical person) wrote the infrastructure layer: Salesforce REST, an Exa MCP wrapper for research, image sourcing, prompt templates, CSP registration, fail-fast PATCH. The sales team uses it as a black box and gets their own business skill “prep a demo for client X”. No SaaS purchase, no pulling engineers in for every demo. They just answer the agent’s questions in the terminal and chat with it (a new experience for some people, one that pulls you in - imagine if it does what you need on the first run :D).

The planned next step with them: teach them to improve the skill on their own, based on their own feedback. The logic is simple: every demo-prep run ends with a short form “what worked / what didn’t / which phrase in the skill to rewrite”. The sales rep collects three to five such notes, we sit together for half an hour, and I teach them to make edits in SKILL.md or in its reference files. Essentially this is ownership transfer: from there they fix things themselves and only ask me when they hit a wall.

And this scheme works not only for sales: build the first version of the skill with a technical person alongside business, hand them a working thing, explain how to live with it and improve it from there. The barrier for them to go from a blank line to a working skill on their own is high. The barrier to polish a finished skill by another 30% is low. That’s exactly the gap that Ostrikov closes with “programmers help and answer questions”. I’m in that role for my sales team right now, testing whether it works.

Catalogs: skills.sh, anthropics/skills, SkillMD

anthropics/skills on GitHub: the official repository, 131K+ stars. Categories Creative & Design, Development & Technical, Enterprise & Communication, Document Skills (pptx, xlsx, docx, pdf). Installs with a single Claude Code command.

skills.sh by Vercel: 69K+ skills as of Skills Night, 2M+ installs. The CLI installs a skill in seconds via npx skills add owner/repo, you pick the target CLI and scope (project or user), and you’re done. The leaderboard shows what’s popular: large publishers add up to millions of installs. Mintlify auto-generates a skill for every documentation site they host.

SkillMD and ClawHub: alternative marketplaces, adding several thousand more community skills.

The main piece of infrastructure of recent months is the security audit. Since February 2026, every skill on skills.sh goes through a Socket scan on publish: 60K+ skills checked, with support for Python, JS/TS, Go, Java, Ruby, PHP, .NET, Shell, Markdown. Metrics on a test set of 382 malicious + 355 benign skills: precision 94.5%, recall 98.7%. Flagged skills are hidden from the leaderboard. This matters: at first many people installed skills from GitHub links without looking, and there was a case where a skill that looked like innocent markdown pulled in a Python file that opened a remote shell on install.
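To make those percentages tangible, here is a back-of-the-envelope reconstruction. The raw counts are not published in what I’ve seen; the ones below are my assumption, picked because they reproduce the reported figures after rounding, with “malicious” treated as the positive class.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    return tp / (tp + fp), tp / (tp + fn)


MALICIOUS, BENIGN = 382, 355  # test-set sizes quoted above

# Assumed counts (not published): chosen to match the rounded metrics.
tp, fn = 377, 5    # malicious skills caught / missed
fp, tn = 22, 333   # benign skills wrongly flagged / correctly passed

precision, recall = precision_recall(tp, fp, fn)
print(f"precision {precision:.1%}, recall {recall:.1%}")
print(f"missed malicious: {fn} of {MALICIOUS}, benign flagged: {fp} of {BENIGN}")
```

Read this way, the scanner would miss roughly 5 malicious skills out of 382 and wrongly hide about 22 benign ones out of 355. That is a good filter, and also a reminder to still read what you install.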

In parallel there’s MCP and CLI. MCP is about tools-via-protocol (how the agent reaches a service), CLI is about tools-via-command-line (rougher, but easier to write and easier to embed into a skill in one line), and skills are about instructions (how the agent thinks and works on a task). These are three different layers, and they complement each other rather than compete. A good skill often combines all three: a text instruction in SKILL.md plus a couple of CLI commands in bash blocks plus an MCP server when interactive access to an external API is needed.

Concrete cases

A few live examples I’ve seen:

- token-auditor (Bayram Annakov): a skill that audits token spend in Claude Code, a few dozen lines in SKILL.md vs a paid Helicone or Langfuse subscription. Installs with one command, works right away. It catches concrete leaks: Opus where Sonnet would do, a bloated CLAUDE.md attached to every message, a cron every 5 minutes instead of every hour, context bloat with no compact.

- CLI Creator skill in Codex App (OpenAI) or Claude Code: feed it API docs, OpenAPI JSON, an SDK, or even shell history, and you get a CLI+skill pair for your service. Timur Khakhalev rebuilt his read-only API into a CLI+skill in a few hours, by his own account. After that the agent can: “How many users registered today with parameter X?” → pulls in the skill → goes through the CLI → answers.

- rpa-skills (Pavel Zloi): the author shares in his Telegram channel a pack of 4 RPA skills for vibe-coding via BDD: project context warm-up, “layered pie” rules generation, adding a feature via red-green-refactor, fixing a bug via a reproduction test. They stopped pasting long prompts into chat: pulled them out into named commands.

- Product Audit skill: audits a product across 4 categories (strategy, agent rules, data infra, ops) and outputs an interactive HTML report in the browser.

- colleague.skill (titanwings): already 16,868 stars on GitHub, per the ROADMAP 13,000+ in the first two weeks. You feed it a colleague’s chat history from messengers (Feishu, DingTalk, Slack, email) and you get an AI agent with their workflows and a model of their personality. Two layers: workflow + personality. I’ll set the ethical questions aside, but the very fact shows the range.

Another personal example: the pipeline behind this post (generate-content)

A separate note about generate-content, because this case breaks the convenient picture of “a skill in half an evening”. Inside it’s not one skill but a pipeline of 3-4 sequential ones, and that’s exactly what assembled the post you’re reading right now.

Start: collecting and brainstorming ideas. Ideas accumulate daily, automatically: one skill pulls them from Telegram digests, another from YouTube breakdowns, a third from the logs of my work sessions. Today’s wave queued up 14 ideas for processing. That’s not the final list, that’s raw material. Then a separate step: I come to the agent with a topic, and we refine the angle, audience, what to add and what to drop, together. Right in planning/brainstorm mode. Without that dialogue, even the smartest pipeline assembles an averaged-out article with no living voice.

Next, enrichment through my local notes base (~35K records from Telegram channels, YouTube, articles, my own sessions). One of the sub-agents decomposes the topic into 25-27 targeted queries, queries the vector store in parallel, aggregates and dedupes the results. The output is a 40-50KB dossier on the topic: quotes, pain points, numbers, examples from real practice, my own old notes I wouldn’t have remembered otherwise.
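The fan-out, aggregate, dedupe shape of that step looks roughly like this. It is a sketch under loud assumptions: `search_notes` is a hypothetical keyword-overlap stand-in for a real vector search, and three toy notes replace the ~35K-record base.

```python
from concurrent.futures import ThreadPoolExecutor

# Tiny in-memory stand-in for the notes base (hypothetical data).
NOTES = [
    {"id": 1, "text": "skills need tests before the LLM call"},
    {"id": 2, "text": "token audit found a bloated CLAUDE.md"},
    {"id": 3, "text": "skills break when a new model version ships"},
]


def search_notes(query: str, top_k: int = 5) -> list[dict]:
    """Hypothetical retriever: scores notes by word overlap, not embeddings."""
    words = set(query.lower().split())
    hits = []
    for note in NOTES:
        overlap = len(words & set(note["text"].lower().split()))
        if overlap:
            hits.append({**note, "score": overlap})
    return sorted(hits, key=lambda n: n["score"], reverse=True)[:top_k]


def build_dossier(queries: list[str]) -> list[dict]:
    """Run all queries in parallel, then keep the best-scoring copy of each note."""
    with ThreadPoolExecutor(max_workers=8) as pool:
        batches = list(pool.map(search_notes, queries))
    best: dict[int, dict] = {}
    for batch in batches:
        for hit in batch:
            seen = best.get(hit["id"])
            if seen is None or hit["score"] > seen["score"]:
                best[hit["id"]] = hit  # dedupe by note id
    return sorted(best.values(), key=lambda n: n["score"], reverse=True)


dossier = build_dossier([
    "skills tests",
    "model version breaks skills",
    "token audit",
])
for note in dossier:
    print(note["score"], note["text"])
```

Deduping by ID after the parallel fan-out matters because targeted queries overlap heavily: the same note comes back for several of them, and without the merge the dossier fills up with repeats.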

After enrichment comes research via Exa MCP. This isn’t a duplicate, it’s a freshness add-on: numbers, current quotes, recent events that aren’t in the local base yet. Then a draft from Opus, then 5 parallel critics (general, rhythm, brand voice, fact-check, kb-leak guard), then a rewriter from Opus assembles their notes and rewrites. And at the very end: an image generation step that was added to the pipeline literally today. The images for this post came from this step: judge the detail and style yourselves.

Now the main thing about “in half an evening”. The first version of generate-content really was built in half an evening. But even that one already had critics and sub-agents: i.e. the starting point wasn’t minimal. What came after? 3-4 months of iteration. Phase counts changed. A vector notes base appeared as a dependency, and that’s a separate architectural fork: where to store, how to populate, how to clean, what to do with duplicates, how to validate. Stage by stage, VERIFY tests were added (the same pattern described in the “Reality” chapter below). Today the image block grew in. In parallel I had to think about what to feed enrichment with: where the data comes from (telegram-scraper into Supabase, YouTube transcripts), how it syncs, what to do when a source goes down.

This is already an engineering object, not a one-day skill. And here’s the takeaway that matters for the reader: not every skill is small, and that’s fine. Simple routines: yes, half an evening, and the “talk to the agent → ready SKILL.md” pipeline works exactly as advertised. Systemic, multi-stage ones with dependencies (storage, third-party APIs, verification at every stage): those are engineering objects, and they’ll be polished for months. The main pattern from me: a good first version in half an evening + the willingness to keep polishing. Without the second part, the first version dies in week three, like I describe in the next chapter.

Reality: skills are living code

Thought I’d written a skill and was done. Two weeks later it didn’t work anymore: the input format had drifted, the model was interpreting the steps differently, there were no tests inside. I had to do a refactoring sprint. Concrete stories from my own experience.

In one skill I processed posts in batches (chunks), and for every rejected post the model returned a full JSON object: the post text, metadata, the rejection reason, the whole pile. One chunk came out to ~35,000 characters of overhead the model was carrying back and forth in every next request. I changed the format to an array of rejected post IDs: just numeric identifiers, no text or metadata. It became ~350 characters per chunk, 100x less. On a long pipeline this translated into tens of dollars saved over a couple of runs and into speed: the model stopped choking on its own output.
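The difference between the two response formats is easy to measure. A sketch with made-up data (the post bodies, metadata, and ID range are hypothetical, so the exact sizes will not match my real numbers):

```python
import json

# Hypothetical chunk of 20 rejected posts; filler text stands in for real bodies.
rejected = [
    {
        "id": post_id,
        "text": "lorem ipsum " * 50,
        "meta": {"channel": "example", "views": 1234},
        "reason": "off-topic for this digest",
    }
    for post_id in range(101, 121)
]

# Before: the model echoes every rejected post in full.
verbose = json.dumps({"rejected": rejected})

# After: the model returns only the identifiers.
compact = json.dumps({"rejected_ids": [post["id"] for post in rejected]})

print(f"full objects: {len(verbose)} chars")
print(f"ids only:     {len(compact)} chars")
print(f"ratio:        ~{len(verbose) // len(compact)}x")
```

The caller already holds the full posts, so the IDs are enough to reconstruct everything on its side. The model only needs to say which ones it rejected.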

Another skill was hitting the Claude Code output token limit (32K), and the fix wasn’t compressing the data, it was changing the format: generate Python code once, which itself writes the data, instead of having the agent dump JSON outward. 79KB of JSON is 40K tokens; before the agent wrote it, now a script does it in 0 seconds and 0 cents. It took 5 iterations and slamming into the token-limit wall before I figured out where the fix was.

Third story is about splitting Opus and Sonnet. One Opus agent did everything: read configs, updated JSON, did analysis. Opus costs 5x more than Sonnet in credits. Split it: Sonnet for the “plumbing” (reading configs, writing files), Opus for the “brain” (analysis).
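The split itself is a routing table. A minimal sketch, assuming equal token volume per task (a simplification) and the 5x price ratio quoted above; the task labels and the `pick_model` helper are hypothetical, and the model names are plain labels rather than API identifiers.

```python
# Relative cost per task in arbitrary credits; only the 5x ratio comes from the text.
RELATIVE_COST = {"sonnet": 1, "opus": 5}

# Hypothetical labels for the "plumbing" work.
PLUMBING = {"read_config", "update_json", "write_file"}


def pick_model(task_kind: str) -> str:
    """Cheap model for plumbing, expensive model for everything that needs thinking."""
    return "sonnet" if task_kind in PLUMBING else "opus"


def run_cost(task_kinds: list[str], router=pick_model) -> int:
    """Total relative cost of a run under a given routing rule."""
    return sum(RELATIVE_COST[router(kind)] for kind in task_kinds)


tasks = ["read_config", "update_json", "analysis", "write_file"]

all_opus = run_cost(tasks, router=lambda kind: "opus")
split = run_cost(tasks)

print(f"everything on Opus: {all_opus} credits")
print(f"Sonnet for plumbing: {split} credits")
```

With three plumbing tasks and one analysis task the relative cost drops from 20 to 8 credits. The real saving depends on how many tokens each task actually burns.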

And the main thing: a skill should check itself, and here it pays to separate two things people often conflate. Tests are deterministic checks against concrete values: input data → expected output, no LLM involved, the check takes a second. EVALs are checks on the quality of the LLM output: did the model classify correctly, did it hit the format, is it hallucinating. Tests can (and should) be written for everything: for code, for raw data, for text prompts before they go to the model, for the shape of the response after. In my generate-content skill the VERIFY stage is a Python script with 13 tests that runs before the data goes to the LLM or to external services: 0 LLM tokens for verification, and if a test fails, the agent sees exactly what broke and fixes it without me. Each such test saves the cycle “passed crooked data → model generated trash → I noticed → I redid it” with its tokens and time. I’ve seen this myself: in the generate-digest skill without a VERIFY stage, the agent wrote the same post three runs in a row before I noticed the output didn’t have the right IDs. Without tests and EVALs, on long pipelines you’ll get “error multiplication”, as Ostrikov puts it, and a compounding mess pours from the agent into production. Minimum hygiene: ask your coding agent to write tests for every stage of the skill, so it runs them itself before handing you the result.
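A deterministic pre-LLM check of this kind can be very small. The sketch below is not my actual VERIFY script: the field names and the `verify_posts` helper are hypothetical, but it shows the idea, including the duplicate-ID check that would have caught the generate-digest problem.

```python
REQUIRED_FIELDS = {"id", "text", "source"}  # assumed schema, for illustration


def verify_posts(posts: list[dict]) -> list[str]:
    """Deterministic checks on raw data before any LLM call. Returns error messages."""
    errors = []
    seen_ids = set()
    for index, post in enumerate(posts):
        missing = REQUIRED_FIELDS - post.keys()
        if missing:
            errors.append(f"post #{index}: missing fields {sorted(missing)}")
            continue
        if not str(post["text"]).strip():
            errors.append(f"post {post['id']}: empty text")
        if post["id"] in seen_ids:
            errors.append(f"post {post['id']}: duplicate id")
        seen_ids.add(post["id"])
    return errors


batch = [
    {"id": 1, "text": "Skills as apps", "source": "telegram"},
    {"id": 1, "text": "Skills as apps", "source": "telegram"},  # same post twice
    {"id": 2, "text": "  ", "source": "youtube"},               # blank body
    {"text": "no id", "source": "notes"},                       # schema violation
]

problems = verify_posts(batch)
for problem in problems:
    print(problem)
# An agent running this stage would stop here and fix the data before spending tokens.
```

Each message names the exact record and the exact rule, which is what lets the agent repair the input on its own instead of handing the failure back to me.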

Summary: skills aren’t “write and forget”, they’re code that needs refactoring, tests, EVALs, and versioning. Taking skills seriously in a company isn’t writing one once a quarter, it’s setting up tests on raw data before the LLM, EVALs on output quality after the LLM, tracking tokens, rewriting built-in skills with your own.

There’s a sober wariness in the community: “It’s a huge illusion that you can write production-grade skills in two weeks. If you could, everyone would have already done it.” Production skills need months of iteration on real data. That’s why the local-agent → cron-in-cloud architecture works: on the local agent you debug to where it “works 19 out of 20 times”, then you push to production with monitoring.

And separately on durability over time. That same 30% of my skills that runs on full autopilot still periodically breaks when new LLM versions ship. The model’s behavior changes, it reads SKILL.md instructions differently, handles tools differently, formats JSON differently: and the stage that ran clean yesterday suddenly starts misbehaving. The fix is fast (an hour or two of edits in SKILL.md plus a couple of new tests in VERIFY), but it does require keeping an eye on model releases. That’s part of the cost of ownership, and in the SaaS stack you don’t have it (the vendor pays for it).

Who needs this: not just developers

A marketing manager, a lawyer, an accountant, a salesperson aren’t developers. And it’s exactly for them that the new form works strongest, because it cuts the dependency on engineering teams.

The marketer assembles briefs, generates offer variants per segment, summarizes feedback and meetings, picks segments. A personal library instead of HubSpot Workflows plus N services on top.

Sales runs the demo-prep skill for a client (my example above). One practitioner showed his cold outreach pipeline: Apollo API → lead scrape → site analysis → personalized emails at ~$0.01 per letter, 10,000 letters in a week.

The lawyer loads a standard contract, the skill goes through a checklist and gives back annotations. A separate skill prepares correspondence with the counterparty using company templates.

The accountant reconciles invoices, generates standard documents using the client’s details, validates the correctness of accounting entries with one command.

HR reviews resumes against the role profile, prepares an offer based on position and grade, assembles an onboarding plan for a new hire.

The PM runs a product-audit, gathers analytics on a feature, prepares a PRD from a template.

The designer generates illustrations for a post via a style preset, reviews a landing page with a package of notes, assembles a moodboard from a brief.

And here’s what matters: neither the accountant nor the lawyer nor the marketer nor the PM would have written an integrated internal service themselves. They don’t have to: they write SKILL.md by voice or text, and the infrastructure layer (written once by the technical team) does the rest. That’s the point of the two-layer architecture.

Alexey Ostrikov says it directly: vibe-coding skills is a job for non-technical people, not for programmers. “If you make programmers do it, you won’t have the resources to scale. Programmers should help and answer questions.” AI-fluency is a matter of two months, not a university degree. Experience × expertise in a profession × AI-fluency = a new leadership role.

What to do next week

One routine a week, one new skill. Where to start:

- Install Claude Code (or Cursor / OpenCode / Codex CLI: all of them read the same SKILL.md). An hour for onboarding.

- Pick one routine that repeats 3+ times a week: putting together a report, sorting email, formatting documents, checking links, summarizing a meeting. Ostrikov puts it this way: “A candidate for a skill is anything that repeats and doesn’t require real thinking. If you don’t need to think, why spend manual effort on it?”

- Describe the process by voice or text to the agent. 15-20 minutes. Then the prompt: “Create a skill based on this conversation”.

- Run the skill 3-5 times, fix the obvious bugs. Ask the coding agent to write tests for every stage: checks on raw data before the LLM (input shape, presence of required fields), checks on output format after the LLM (those are EVALs already). Tests get written not only for code, but also for text and raw data. The goal: catch the error before the tokens get burned.

- Install a couple of ready-made skills from skills.sh: token-audit, product-audit, something from anthropics/skills. Not for the sake of using them, but to look at how others structure steps, what they offload to references, how they write a VERIFY stage.

- Optionally publish your skill on GitHub and on skills.sh: not for fame, for distribution within the team. With the security audit, it’s safer now.

- Cancel one SaaS subscription that your first skill replaces. That’s the clean ROI of the first week.
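The two kinds of checks from the testing step above (raw data before the LLM, output format after it) can be sketched as follows. This is a minimal illustration, assuming a meeting-summary skill that takes a record with text fields and expects a JSON object back; the field names, key names, and both function names are hypothetical.

```python
import json

# Hypothetical contract for a meeting-summary skill.
REQUIRED_INPUT_FIELDS = {"title", "date", "transcript"}
REQUIRED_OUTPUT_KEYS = {"summary", "action_items"}


def check_input(record: dict) -> list[str]:
    """Pre-LLM check: return a list of problems; an empty list means OK."""
    problems = []
    missing = REQUIRED_INPUT_FIELDS - set(record)
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    for field in sorted(REQUIRED_INPUT_FIELDS & set(record)):
        value = record[field]
        if not isinstance(value, str) or not value.strip():
            problems.append(f"empty or non-text field: {field}")
    return problems


def check_output(raw: str) -> list[str]:
    """Post-LLM check: the reply must be a JSON object with the expected keys."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]
    problems = []
    missing = REQUIRED_OUTPUT_KEYS - set(data)
    if missing:
        problems.append(f"missing keys: {sorted(missing)}")
    if "action_items" in data and not isinstance(data["action_items"], list):
        problems.append("action_items is not a list")
    return problems
```

If `check_input` returns anything, the run stops before a single token is spent; `check_output` is the kind of format check that tends to fail first when a new model version starts wrapping or restructuring its JSON.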

After that comes the cycle: one routine a week → one new skill. After a quarter you have 12 skills and the subscription stack has shrunk by $100-150/mo. After a year that’s 50 skills, each saving 10-15 minutes a day. Ostrikov gives the headline number for his team: 2-3 hours a day for a manager who vibe-codes actively.

And in parallel a shift is happening for companies: the 10-person teams where 7 people on $200/mo subscriptions vibe-code their own skills on top of shared infrastructure are out-shipping companies of 1,000 people with no internal Skill Store. Simply because the big ones don’t have the infrastructure, and the two-layer architecture doesn’t work without it. Small teams gain an advantage, and it’s not temporary.

Skills as applications 2.0 are a new format of user interface for every profession: a conversation with an agent instead of a click in someone else’s UI. A library of your own 25 skills isn’t “I’m a nerd”, it’s your personal app store, where you yourself decide which functions you actually need.

P.S. By the way, you can ask the agent to visualize the data in plain HTML and see the result in a UI you’re more used to. I’m testing this approach right now with yet another new skill for AI video generation (a replacement for weavy.ai subscriptions); I’ll write it up once I’m done testing.

Sources

- Introducing Agent Skills (Anthropic, Oct 16 2025 + Dec 18 2025 update)

- anthropics/skills: official repository, 131K+ stars

- Vercel: Introducing skills, the open agent skills ecosystem (Jan 20 2026)

- Vercel: Skills Night: 69,000 skills, 2M installs

- Vercel changelog: automated security audits for skills.sh (Feb 17 2026)

- Socket brings supply chain security to skills.sh

- Manus AI Embraces Open Standards (Jan 27 2026)

- OpenAI Codex: Agent Skills

- Alexey Ostrikov: How to Build an AI-First Company (original title: Как построить AI-First компанию; May 3 2026)

- openclaw.rocks: AI Skills Are the New Apps

- Anthropic, AI Engineer talk: Don’t Build Agents, Build Skills Instead (Dec 2025)

- Ry Walker: Agentic Skills Frameworks Compared