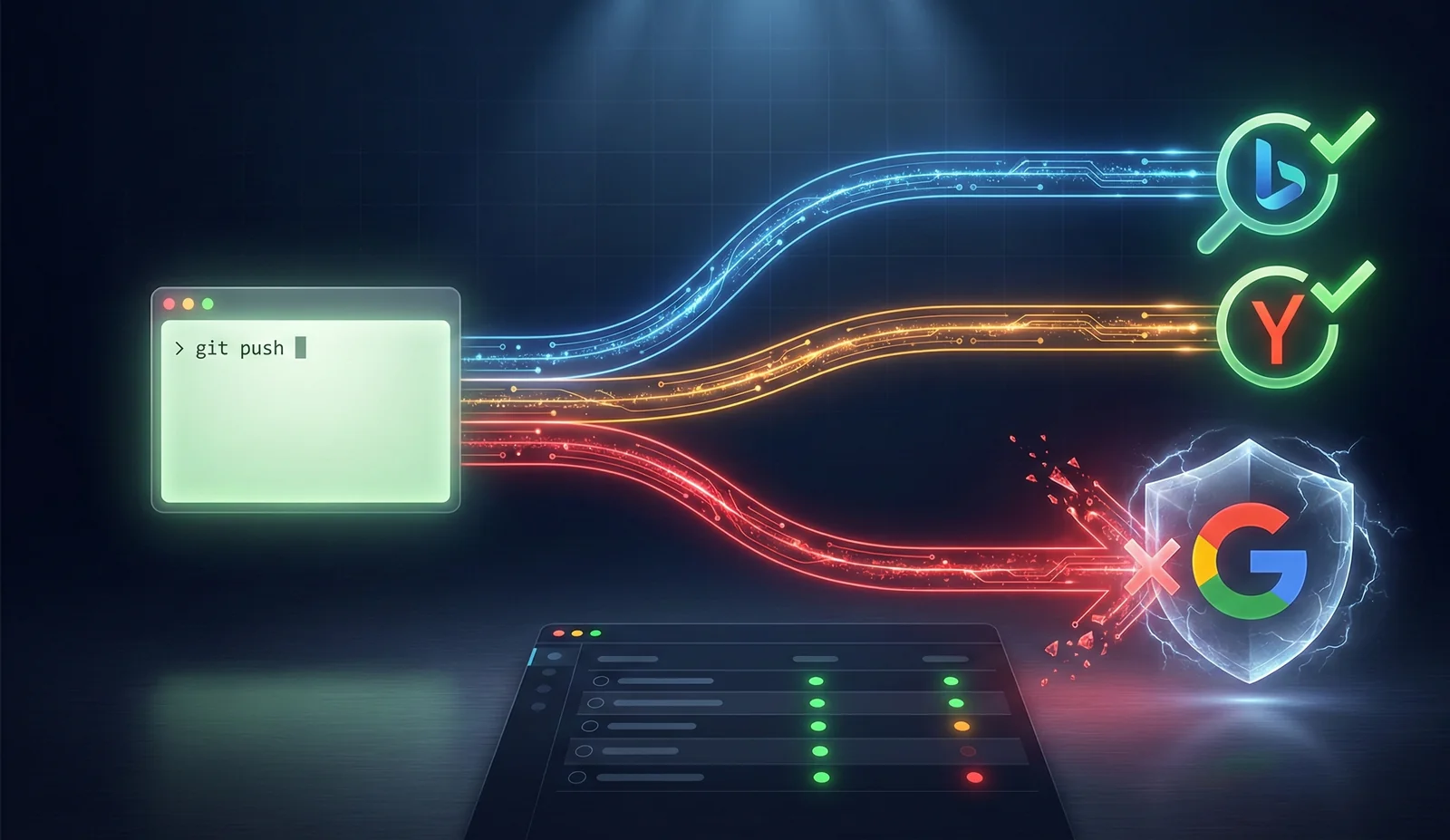

Push to main - 2 minutes later Bing and Yandex already know about the new page. Google doesn’t. Google is harder.

Three weeks ago I was publishing 2-3 articles a week and manually going into Google Search Console, Yandex Webmaster, Bing Webmaster Tools. Copy the URL, hit “Request indexing”. For 15 pages - tolerable. But the blog kept growing and the routine got annoying.

What I built

A GitHub Actions workflow that triggers on every push to main. All the logic lives in one script - seo-pipeline.mjs, 378 lines.

Step 1: Wait for Vercel

sleep 90 - crude, but it works. Vercel deploys in 30-60 seconds, I give it a buffer. Spent 40 minutes trying to poll the Vercel API for deploy status - turned out to be unnecessary. 90 seconds is plenty.

Step 2: Parse the sitemap

The script fetches sitemap-0.xml, extracts all <loc> entries. Compares them against what’s stored in Upstash Redis. New URLs go into the submit queue.
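
The parse-and-diff step can be sketched roughly like this. It is a minimal illustration, not the actual code from seo-pipeline.mjs: the function names are made up, and `knownUrls` stands in for whatever list comes back from Upstash.

```javascript
// Extract every <loc> value from a sitemap XML string.
// A regex is enough for a flat, machine-generated sitemap; a real XML parser
// would be safer for hand-edited or unusual files.
function extractLocs(xml) {
  const locs = [];
  const re = /<loc>\s*([^<\s]+)\s*<\/loc>/g;
  for (const match of xml.matchAll(re)) {
    // Sitemaps escape "&" as "&amp;", so undo that before comparing URLs.
    locs.push(match[1].replace(/&amp;/g, "&"));
  }
  return locs;
}

// Return the sitemap URLs that are not in the already-known list.
function findNewUrls(sitemapUrls, knownUrls) {
  const known = new Set(knownUrls);
  return [...new Set(sitemapUrls)].filter((url) => !known.has(url));
}

// Hypothetical wiring: sitemapUrl is a placeholder, knownUrls would come from storage.
async function collectNewUrls(sitemapUrl, knownUrls) {
  const res = await fetch(sitemapUrl);
  if (!res.ok) throw new Error(`Sitemap fetch failed: HTTP ${res.status}`);
  return findNewUrls(extractLocs(await res.text()), knownUrls);
}
```

Keeping the diff as a pure function makes it easy to test without touching the network or Redis.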

Step 3: IndexNow

The script sends the new URLs in a single POST request to api.indexnow.org. IndexNow is a protocol from Microsoft Bing and Yandex, launched in October 2021. One submission reaches both Bing and Yandex - participating engines share submitted URLs with each other.

HTTP 200 or 202 - accepted. Bing usually indexes within hours, sometimes minutes.
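
For reference, a bulk IndexNow submission looks roughly like the sketch below. The request shape (`host`, `key`, `keyLocation`, `urlList`) follows the public IndexNow protocol; the function names are illustrative, and placing the key file at the site root as `<key>.txt` is an assumption about the setup.

```javascript
// Build the JSON body for an IndexNow bulk submission.
// keyLocation is optional in the protocol; it is derived here on the
// assumption that the key file is served from the site root.
function buildIndexNowPayload(host, key, urls) {
  return {
    host,
    key,
    keyLocation: `https://${host}/${key}.txt`,
    urlList: urls,
  };
}

// Submit all URLs in one POST. Throws unless the response is 200 or 202.
async function submitToIndexNow(host, key, urls) {
  if (urls.length === 0) return { submitted: 0, status: null };
  const res = await fetch("https://api.indexnow.org/indexnow", {
    method: "POST",
    headers: { "Content-Type": "application/json; charset=utf-8" },
    body: JSON.stringify(buildIndexNowPayload(host, key, urls)),
  });
  // 200 = accepted; 202 = accepted, key validation still pending.
  if (res.status !== 200 && res.status !== 202) {
    throw new Error(`IndexNow rejected the batch: HTTP ${res.status}`);
  }
  return { submitted: urls.length, status: res.status };
}
```

Skipping the request entirely when there are no new URLs keeps a push that only touches code from generating needless API calls.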

Step 4: Yandex Webmaster API

IndexNow works for Yandex, but I added a direct recrawl via their API too. Quota is 20 URLs per day. The script checks the remaining quota, submits new pages for recrawl. Task ID is saved for tracking.
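
A rough sketch of the quota check and recrawl loop follows. The endpoint paths and the `quota_remainder` / `task_id` field names reflect my reading of the Yandex Webmaster API v4 docs and should be verified against them; `userId`, `hostId` and `token` are placeholders, and the function names are made up.

```javascript
const YANDEX_API = "https://api.webmaster.yandex.net/v4";

// Pick at most `remaining` URLs so a run never exceeds the daily quota.
function pickWithinQuota(urls, remaining) {
  return urls.slice(0, Math.max(0, remaining));
}

// Check the remaining quota, then queue as many URLs as it allows.
// Returns one { url, taskId } entry per queued page for later tracking.
async function recrawlViaYandex({ userId, hostId, token }, urls) {
  const base = `${YANDEX_API}/user/${userId}/hosts/${hostId}/recrawl`;
  const headers = {
    Authorization: `OAuth ${token}`,
    "Content-Type": "application/json",
  };

  const quotaRes = await fetch(`${base}/quota`, { headers });
  if (!quotaRes.ok) throw new Error(`Quota check failed: HTTP ${quotaRes.status}`);
  const { quota_remainder } = await quotaRes.json();

  const tasks = [];
  for (const url of pickWithinQuota(urls, quota_remainder)) {
    const res = await fetch(`${base}/queue`, {
      method: "POST",
      headers,
      body: JSON.stringify({ url }),
    });
    if (!res.ok) throw new Error(`Recrawl failed for ${url}: HTTP ${res.status}`);
    const { task_id } = await res.json();
    tasks.push({ url, taskId: task_id });
  }
  return tasks;
}
```

URLs that do not fit into today's quota are simply left out here; a real pipeline would keep them in the queue for the next run.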

Step 5: Google Search Console API

Dead end. Google doesn’t support IndexNow. At all. As of February 2026 - no support and no announced plans to add it.

Google has an Indexing API, but it officially works only for pages with JobPosting structured data or BroadcastEvent embedded in a VideoObject. For regular content - useless.

The Search Console API (urlInspection.index.inspect) is read-only. It checks status: indexed, discovered, crawled. Submit via API - not possible. Only manually through the Search Console UI.

Spent 2 hours trying to work around it. Tried the Indexing API with regular content - Google accepts the request and silently ignores it. Dozens of forum threads confirm the same thing.

The script checks the status of all URLs via the GSC API and saves verdicts. You can see which pages Google has indexed and which are stuck on “Discovered - currently not indexed”. Not automated submission, but at least monitoring.
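
The status check can be sketched as below. The endpoint and the `inspectionResult.indexStatusResult` fields come from the URL Inspection API reference; obtaining the OAuth access token is out of scope here, and the function names and the shape of the stored record are assumptions.

```javascript
const INSPECT_ENDPOINT =
  "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect";

// Reduce the API response to the few fields worth storing per URL.
function summarizeInspection(url, body) {
  const status = body?.inspectionResult?.indexStatusResult ?? {};
  return {
    url,
    verdict: status.verdict ?? "VERDICT_UNSPECIFIED", // e.g. PASS, NEUTRAL, FAIL
    coverageState: status.coverageState ?? "unknown", // human-readable state
    lastCrawlTime: status.lastCrawlTime ?? null,
  };
}

// siteUrl is the property as registered in Search Console,
// e.g. "https://example.com/" or "sc-domain:example.com".
async function inspectUrl(accessToken, siteUrl, url) {
  const res = await fetch(INSPECT_ENDPOINT, {
    method: "POST",
    headers: {
      Authorization: `Bearer ${accessToken}`,
      "Content-Type": "application/json",
    },
    body: JSON.stringify({ inspectionUrl: url, siteUrl }),
  });
  if (!res.ok) throw new Error(`Inspection failed for ${url}: HTTP ${res.status}`);
  return summarizeInspection(url, await res.json());
}
```

The `coverageState` string is where values like “Discovered - currently not indexed” show up, so it is the field a dashboard would most likely display.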

Step 6: Sync to Upstash

All results go into Upstash Redis via the site’s API endpoint. Serverless, per-request pricing, no idle cost. At my volumes - free tier.
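
Pushing results through the site's own endpoint might look like this sketch. Everything specific in it is hypothetical: the `/api/seo/sync` path, the bearer-secret check and the payload shape are placeholders, not the real endpoint.

```javascript
// Build the request for the site's sync endpoint.
// "/api/seo/sync" and the bearer secret are hypothetical placeholders.
function buildSyncRequest(siteOrigin, secret, results) {
  return {
    url: new URL("/api/seo/sync", siteOrigin).toString(),
    options: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${secret}`,
      },
      body: JSON.stringify({ checkedAt: new Date().toISOString(), results }),
    },
  };
}

// Send the results; the endpoint is expected to write them to Redis.
async function syncResults(siteOrigin, secret, results) {
  const { url, options } = buildSyncRequest(siteOrigin, secret, results);
  const res = await fetch(url, options);
  if (!res.ok) throw new Error(`Sync failed: HTTP ${res.status}`);
}
```

Going through the site's endpoint keeps the Redis credentials on the server side, so the workflow only needs one shared secret.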

Dashboard /seo

The SeoTracker React component pulls data from Upstash and renders a table: each URL, status across Google/Yandex/Bing, date of last check. Pipeline action log. You can see that Bing indexed within an hour while Google has been “discovered but not indexed” for three days.

Result

Every push to main triggers a full cycle. Yandex and Bing get notified automatically. Google - only status check (submit is still manual).

Out of 15 pages: Bing - all 15 indexed within a day of setup. Yandex - 13 out of 15 within two days. Google - 9 out of 15 after manual submit, the rest are queued.

What I learned

IndexNow solves the submission problem for the engines that have adopted it - Bing and Yandex, plus a few smaller ones such as Seznam and Naver - but not for Google. One protocol. Building a GitHub Action for it takes an evening - around three hours with testing.

Google has no API for submitting regular content. The Indexing API only covers JobPosting and BroadcastEvent pages. The only path is manual submission in Search Console plus waiting. I haven’t found a way to automate this yet. If you have - let me know.

Chose Upstash over SQLite/JSON files because calling a serverless API from GitHub Actions is just one fetch, no drivers needed. Also looked at Turso and PlanetScale, but for 15 keys Redis is simpler. Trade-off: vendor lock-in with Upstash. But on the free tier with 15 keys - not a big deal.