40,335 messages. 11,336 threads. 50 HTML files. One AI-focused Telegram group that I decided to turn into a structured knowledge base. Doing it manually would’ve taken weeks. Claude Code handled it in a single session.

I exported the data from Telegram Desktop. The goal: extract everything useful (recommendations, use cases, opinions, tools, statistics), sort it into 12 categories, and get clean JSON as output. 227 MB of raw data.

Swarm Architecture

I wrote a custom skill called telegram-channel-processor for Claude Code. Why a skill instead of just a prompt? I needed reproducibility: the ability to rerun the processing on other chats without rewriting the logic. The design is a classic orchestrator-worker pattern.

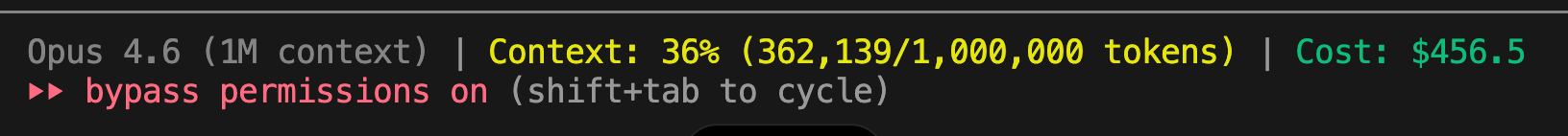

Orchestrator: Opus 4.6 with a 1M-token context window. It tracks state across all 18 batches: which chunks are processed, which failed, which need retries. With a 200K context window, this wouldn’t have worked; the orchestrator would’ve lost the thread by batch ten.
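The orchestrator keeps this bookkeeping in its context window, not in code. Purely as an illustration, here is a minimal sketch of the same state written out as an explicit record that can be serialized and restored. The `RunState` and `Status` names and the status values are hypothetical; they are not part of the telegram-channel-processor skill.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import Enum


class Status(str, Enum):
    PENDING = "pending"
    DONE = "done"
    FAILED = "failed"
    RETRY = "retry"


@dataclass
class RunState:
    # Hypothetical layout: chunk ID -> status value ("pending", "done", ...).
    chunks: dict[str, str] = field(default_factory=dict)

    def mark(self, chunk_id: str, status: Status) -> None:
        self.chunks[chunk_id] = status.value

    def ids_with(self, status: Status) -> list[str]:
        # Sorted so the orchestrator processes retries in a stable order.
        return sorted(cid for cid, s in self.chunks.items() if s == status.value)

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "RunState":
        return cls(**json.loads(text))


state = RunState({f"chunk-{i:03d}": Status.PENDING.value for i in range(3)})
state.mark("chunk-001", Status.DONE)
state.mark("chunk-002", Status.FAILED)
restored = RunState.from_json(state.to_json())
print(restored.ids_with(Status.FAILED))  # ['chunk-002']
```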

Workers: Sonnet 4.6. A deliberate trade-off. Sonnet is cheaper and faster, and for a task like “read a chunk, extract facts, return JSON” - it’s more than enough. Running Opus as workers would blow the budget with no quality gain.

In total: 10 parallel agents per batch, 18 batches, 177 data chunks, and 378 sub-agent invocations (including setup, duplicate runs, and retries). That last number surprised me when I tallied it up.
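The batch numbers fit together. With 10 agents per batch, 177 chunks make 17 full batches plus one batch of 7, which gives 18. The invocation count (378) is higher than the chunk count because it also includes setup, duplicate runs, and retries. Here is a minimal sketch of that split; `make_batches` is a hypothetical helper, not code from the skill.

```python
def make_batches(chunk_ids: list[str], batch_size: int = 10) -> list[list[str]]:
    """Split chunk IDs into consecutive batches of at most batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be positive")
    return [chunk_ids[i:i + batch_size] for i in range(0, len(chunk_ids), batch_size)]


chunks = [f"chunk-{i:03d}" for i in range(177)]
batches = make_batches(chunks, batch_size=10)
print(len(batches), len(batches[-1]))  # 18 7
```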

How It Worked

A four-step pipeline:

Parsing. 50 HTML files from Telegram Desktop: extract threads, strip service markup, split into size-limited chunks.
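The post doesn't give the chunk size limit or say how it was measured. Here is a minimal sketch of the chunking step that assumes a character budget: threads are packed greedily, in order, and a thread is never split across chunks. `pack_threads` and `max_chars` are hypothetical, and a token-based budget would track the model's real limit more closely.

```python
def pack_threads(threads: list[str], max_chars: int) -> list[list[str]]:
    """Greedily pack threads, in order, into chunks of at most max_chars characters.

    A single thread longer than max_chars gets a chunk of its own rather than being cut.
    """
    chunks: list[list[str]] = []
    current: list[str] = []
    size = 0
    for thread in threads:
        # Close the current chunk if this thread would push it over budget.
        if current and size + len(thread) > max_chars:
            chunks.append(current)
            current, size = [], 0
        current.append(thread)
        size += len(thread)
    if current:
        chunks.append(current)
    return chunks


print(pack_threads(["aaaa", "bbb", "cc", "ddddddd"], max_chars=6))
# [['aaaa'], ['bbb', 'cc'], ['ddddddd']]
```

The last chunk in the example exceeds the budget because one thread does. Oversized chunks like that are exactly the kind that can choke a worker.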

Filtering. Of 11,336 threads, only 3,218 turned out useful. Twenty-eight percent. I expected 40-50%. Reality was harsher - in any group chat there’s a huge share of greetings, memes, short reactions, and off-topic noise.
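The post doesn't say how usefulness was judged. One option, assumed here and not taken from the original run, is a cheap heuristic pre-pass that drops obvious noise (very short threads, threads made only of greetings or reactions) before any model sees them. The `REACTION` pattern and `min_chars` threshold are hypothetical. The last line checks the percentage: 3,218 of 11,336 is about 28%.

```python
import re

# Hypothetical noise patterns: greetings, thanks, and one-word reactions.
REACTION = re.compile(r"^\s*(hi|hello|hey|thanks|thank you|lol|\+1)\b[\s!.]*$", re.IGNORECASE)


def looks_like_noise(thread: list[str], min_chars: int = 80) -> bool:
    """Cheap pre-filter: flag threads that are very short or made only of reactions."""
    text = " ".join(m.strip() for m in thread)
    if len(text) < min_chars:
        return True
    return all(REACTION.match(m) or not m.strip() for m in thread)


print(f"{3218 / 11336:.1%}")  # 28.4%
```

A filter like this can only remove the most obvious noise. Deciding whether a long thread actually contains a recommendation or a use case still needs the model.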

Extraction. Each worker received a chunk of threads and an instruction: extract structured entries across 12 categories. The output was JSON with the fields category, text, source, date, and tags.
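Worker output is only useful if it matches the schema. Here is a minimal sketch of validating one worker's JSON against the five fields named above, keeping good entries and setting the rest aside. The category slugs (derived from the 12 categories in the Result section), `Entry`, and `parse_worker_output` are hypothetical, not the skill's actual code.

```python
import json
from dataclasses import dataclass

# Hypothetical slugs for the 12 categories in the Result section.
CATEGORIES = {
    "recommendations", "use_cases", "opinions", "tools", "pain_points", "statistics",
    "agent_engineering", "trends", "prompts", "industry_news", "quotes", "ai_business",
}
FIELDS = ("category", "text", "source", "date", "tags")


@dataclass(frozen=True)
class Entry:
    category: str
    text: str
    source: str
    date: str
    tags: tuple[str, ...]


def is_valid(item: object) -> bool:
    """True if item has every field, a known category, and a list of tags."""
    return (
        isinstance(item, dict)
        and all(f in item for f in FIELDS)
        and isinstance(item["category"], str)
        and item["category"] in CATEGORIES
        and isinstance(item["tags"], list)
    )


def parse_worker_output(raw: str) -> tuple[list[Entry], list[object]]:
    """Parse one worker's JSON array into (valid entries, rejected items)."""
    valid: list[Entry] = []
    rejected: list[object] = []
    for item in json.loads(raw):
        if is_valid(item):
            valid.append(Entry(item["category"], str(item["text"]), str(item["source"]),
                               str(item["date"]), tuple(item["tags"])))
        else:
            rejected.append(item)
    return valid, rejected
```

Keeping rejected items instead of discarding them lets the orchestrator decide whether a chunk needs a rerun.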

Aggregation. The orchestrator collected the results, deduplicated them, and merged them into final files by category.
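The post doesn't say how duplicates were detected. Here is a minimal sketch that assumes a simple rule: two entries are duplicates if they share a category and their text matches after lowercasing and collapsing whitespace. Paraphrased duplicates would need fuzzy or embedding-based matching, which isn't shown. `aggregate`, `write_files`, and the `<category>.json` file naming are hypothetical.

```python
import json
from collections import defaultdict
from pathlib import Path


def normalize(text: str) -> str:
    """Case- and whitespace-insensitive key used to spot duplicate entries."""
    return " ".join(text.lower().split())


def aggregate(entries: list[dict]) -> dict[str, list[dict]]:
    """Drop exact duplicates (same category + normalized text) and group by category."""
    seen: set[tuple[str, str]] = set()
    by_category: dict[str, list[dict]] = defaultdict(list)
    for e in entries:
        key = (e["category"], normalize(e["text"]))
        if key in seen:
            continue  # keep the first occurrence only
        seen.add(key)
        by_category[e["category"]].append(e)
    return dict(by_category)


def write_files(by_category: dict[str, list[dict]], out_dir: Path) -> None:
    """Write one JSON file per category into out_dir."""
    out_dir.mkdir(parents=True, exist_ok=True)
    for category, items in by_category.items():
        path = out_dir / f"{category}.json"
        path.write_text(json.dumps(items, ensure_ascii=False, indent=2), encoding="utf-8")
```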

Four chunks had to be restarted, which was unexpectedly nerve-wracking because the first two failures hit back-to-back in the same batch. The agents choked on oversized chunks. The orchestrator detected the failures on its own and split those chunks in half, and this split-and-retry strategy solved it.
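In the actual run the orchestrator applied split-and-retry on its own judgment. Expressed as code, the strategy looks roughly like this minimal sketch: try the whole chunk, and on failure halve it and retry each half, up to a depth limit. `process_with_split`, the `worker` callable, and `max_depth` are hypothetical. Catching every `Exception` keeps the example short; a real version would retry only on errors that smaller input can fix.

```python
from typing import Callable


def process_with_split(
    chunk: list[str],
    worker: Callable[[list[str]], list[dict]],
    max_depth: int = 3,
) -> list[dict]:
    """Run worker on chunk; on failure, halve the chunk and retry each half recursively."""
    try:
        return worker(chunk)
    except Exception:
        if max_depth == 0 or len(chunk) < 2:
            raise  # nothing left to split, so surface the failure
        mid = len(chunk) // 2
        return (process_with_split(chunk[:mid], worker, max_depth - 1)
                + process_with_split(chunk[mid:], worker, max_depth - 1))


def fake_worker(chunk: list[str]) -> list[dict]:
    # Stand-in for a sub-agent that fails on anything larger than two threads.
    if len(chunk) > 2:
        raise RuntimeError("chunk too large")
    return [{"thread": t} for t in chunk]


result = process_with_split(["a", "b", "c", "d", "e"], fake_worker)
print([r["thread"] for r in result])  # ['a', 'b', 'c', 'd', 'e']
```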

Result

2,764 structured entries across 12 categories:

- Recommendations: 548

- Use cases: 460

- Opinions: 446

- Tools: 336

- Pain points: 301

- Statistics: 256

- Agent engineering: 223

- Trends: 109

- Prompts: 73

- Industry news: 6

- Quotes: 5

- AI business: 1
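A quick arithmetic check on the list above: the 12 categories add up to 2,764, and the distribution is heavily skewed. The top four categories hold about 65% of all entries, while the bottom three hold 12 entries between them.

```python
counts = {
    "Recommendations": 548, "Use cases": 460, "Opinions": 446, "Tools": 336,
    "Pain points": 301, "Statistics": 256, "Agent engineering": 223, "Trends": 109,
    "Prompts": 73, "Industry news": 6, "Quotes": 5, "AI business": 1,
}
total = sum(counts.values())
top4 = sum(sorted(counts.values(), reverse=True)[:4])
print(total, f"{top4 / total:.0%}")  # 2764 65%
```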

The session was interrupted twice (pause, then continue), so there were three runs in total. With its 1M-token context, the orchestrator picked up the state each time without losing anything.

What I Learned

An agent swarm works at scale. Not as a concept from a blog post - I actually fed it 227 MB and got structured output in a single session instead of weeks of manual work.

For an orchestrator managing 18 batches and tracking the state of 177 chunks, a 1M-token context window is the difference between “it works” and “loses context halfway through.”

Splitting Opus and Sonnet by role saved real money. The orchestrator needs a powerful brain and a large context. Workers need speed and low cost. Had the workers alone run on Opus, the cost difference would have been 5-7x.
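Here is a simple cost model that makes the trade-off concrete, assuming cost is just tokens times a per-token price for each role. The token volumes and the relative price ratio below are placeholders, not measurements from this run or published pricing. Under this model, the worker share of the bill scales directly with the price ratio between models. The whole-run ratio comes out a little lower, because the orchestrator's tokens are billed at Opus rates either way.

```python
def run_cost(tokens_m: dict[str, float], price: dict[str, float]) -> float:
    """Sum of (millions of tokens per role) x (that role's price per million tokens)."""
    return sum(tokens_m[role] * price[role] for role in tokens_m)


# Placeholder volumes (millions of tokens) and relative prices, not real figures.
tokens = {"orchestrator": 2.0, "workers": 30.0}
SONNET, OPUS = 1.0, 5.0

mixed = run_cost(tokens, {"orchestrator": OPUS, "workers": SONNET})
all_opus = run_cost(tokens, {"orchestrator": OPUS, "workers": OPUS})
print(mixed, all_opus, all_opus / mixed)  # 40.0 160.0 4.0
```

Because workers process far more tokens than the orchestrator, the worker model choice dominates the total.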

Only 28% of threads (3,218 of 11,336) turned out to be useful. Even in a high-quality AI community, nearly three-quarters of the content is noise. What to do with 2,764 entries? Still figuring that out. Most likely, a knowledge base for RAG. But that’s the next experiment.