Morning. I fire up Claude Code as usual, and the terminal header already shows Opus 4.7 (1M context) with xhigh effort. Quiet upgrade, no in-app notifications. In parallel, Anthropic reset weekly limits for all subscribers a couple hours earlier (confirmed by folks in various chats). The default effort level in Claude Code was bumped to xhigh across all plans (on the API the default is still high, you have to set xhigh explicitly). The release news and the release itself dropped at the same time.

I ran it through my usual workloads: content, skills, automations, site tweaks, planning new features, development. Here’s everything that matters in one place: what changed under the hood, what actually got better, where the gotchas are, what breaks old prompts, and what to do about migration.

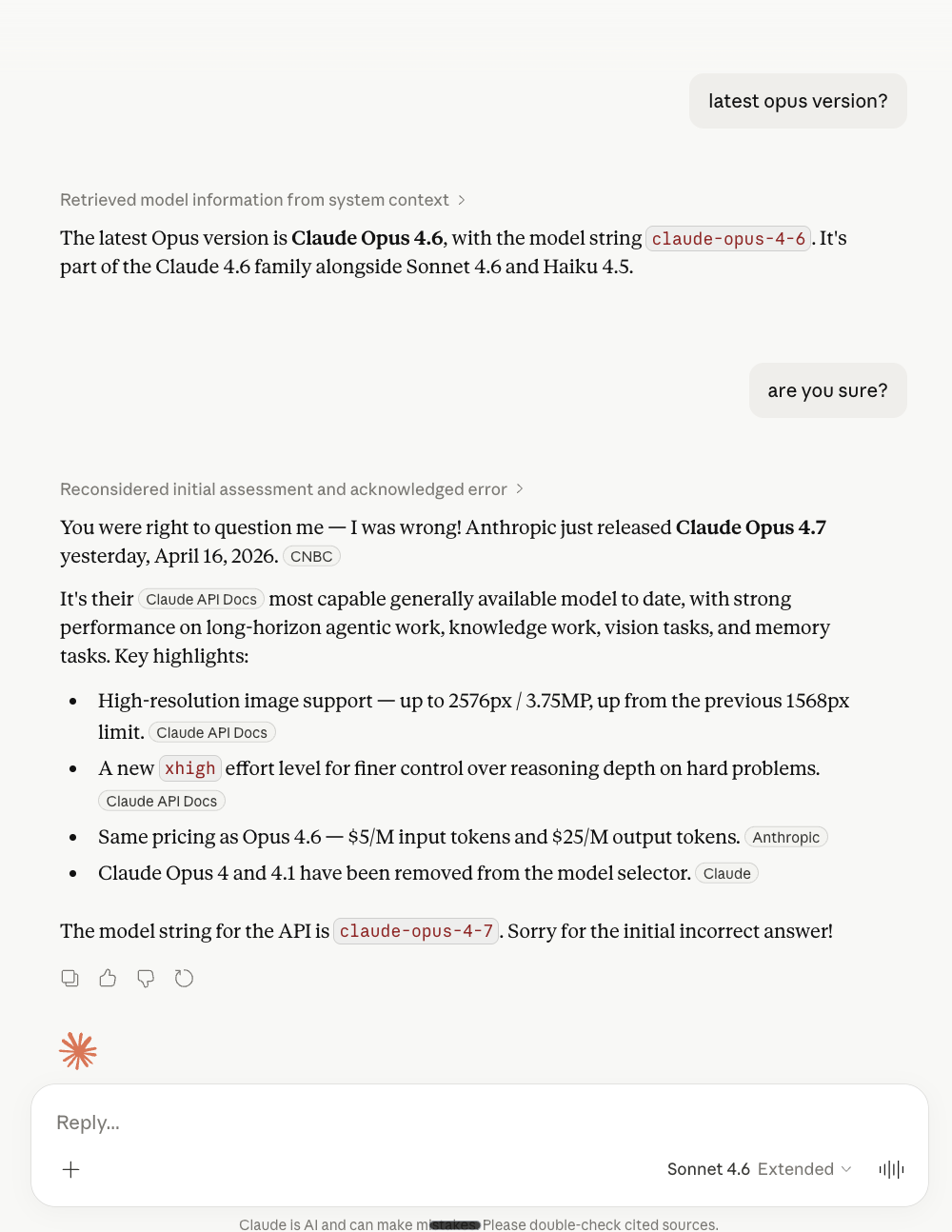

What shipped

Claude Opus 4.7, API model ID claude-opus-4-7. Available on Claude.ai (Pro/Max/Team/Enterprise), in Claude Code, via the Messages API, on Amazon Bedrock, Google Vertex AI, Microsoft Foundry. Same price as 4.6: $5/MTok input, $25/MTok output, up to 90% savings with prompt caching. 1M context window with no long-context premium. 128k max output.

Important context: 4.7 is not the Mythos Preview. That model really is stronger (77.8% on SWE-bench Pro vs 64.3% for 4.7), but it’s locked inside Project Glasswing and available only to verified partners. 4.7 is the first model trained with cyber safeguards: during training Anthropic specifically dampened cyber-capabilities, plus runtime auto-blocking of prompts on prohibited topics is now on.

The release came after weeks of public complaints about 4.6 regression (an AMD senior director and others were posting “Claude can’t be trusted anymore”). Anthropic’s phrasing “previously needed close supervision” is essentially an admission: by April, 4.6 had become worse than it was in January. 4.7 restores trust to a level where you can hand it complex work.

What actually improved

Instructions are followed literally. My CLAUDE.md has hard rules: no auto-commits without confirmation, check status across 4 repos before pushing, specific naming conventions. On 4.6 some of these would slip mid-session and I’d have to repeat them. On 4.7 they hold. Anthropic states it plainly: the model no longer generalizes from one instruction to another or does things it wasn’t asked to. Good for production, a reason to audit old fuzzy prompts.

Planning is more detailed out of the box. 4.6 with plan mode worked well, but for complex features I’d usually do another round of clarifications or run it through a brainstorm step. On 4.7 the plan comes back denser on the first pass: epic, subtasks, architectural forks, CLAUDE.md context picked up automatically. The difference is noticeable on long pipelines, where I now save one round of prompting.

Vision: 2576px vs 1568px. More than 3x the pixels (from 1.15MP to 3.75MP). Critical for computer use and screenshots: dashboards with small fonts, dense UI, data-heavy tables now read without downscaling. XBOW on their computer-use benchmark got 98.5% visual acuity vs 54.5% on 4.6. Bonus: pointing coordinates and bounding boxes are now 1:1 with image pixels, no scale-factor math.
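To make the "no scale-factor math" point concrete, here is a rough sketch of the conversion client code tends to need when an image is shrunk before the model sees it. The function names are hypothetical. It also assumes the limit applies to the long edge and that aspect ratio is preserved, which is my reading rather than something confirmed above.

```python
def downscale_factor(width: int, height: int, long_edge_limit: int) -> float:
    """Factor applied when an image is shrunk to fit the limit (1.0 if it already fits)."""
    # Assumption: the limit applies to the longer side and aspect ratio is kept.
    return min(1.0, long_edge_limit / max(width, height))


def to_original_pixels(x: float, y: float, width: int, height: int,
                       long_edge_limit: int) -> tuple[int, int]:
    """Map a point reported in downscaled-image space back to original pixels."""
    factor = downscale_factor(width, height, long_edge_limit)
    return round(x / factor), round(y / factor)


# A 2576x1440 screenshot: with a 1568px limit the point must be scaled back up,
# with a 2576px limit the factor is 1.0 and the coordinates pass through as-is.
old_style = to_original_pixels(784, 400, 2576, 1440, long_edge_limit=1568)
new_style = to_original_pixels(784, 400, 2576, 1440, long_edge_limit=2576)
```

If your computer-use code still carries a helper like this, it is worth checking whether it now double-scales coordinates.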

Memory on the filesystem. The model writes better notes into CLAUDE.md / MEMORY.md / per-topic files and pulls them up better on new tasks. Rules slip less after compaction. Anthropic mentioned a Dreaming feature that converts short-term memory into long-term (still in preview).

Knowledge work. Claimed and confirmed: .docx redlining, .pptx editing, programmatic chart analysis via PIL with pixel-level data transcription. On GDPval-AA (a third-party eval of economically valuable knowledge work) 4.7 hit 1753 Elo against GPT-5.4’s 1674. If your prompt had hacks like “double-check slide layout before returning”, Anthropic suggests removing them: the model does this on its own now.

Benchmarks, no inflation:

- SWE-bench Verified: 87.6% (4.6 was 80.8%)

- SWE-bench Pro (contamination-resistant): 64.3% vs 53.4% (+10.9 pp, one of the largest single-release jumps)

- CursorBench: 70% vs 58%

- Rakuten-SWE: 3x more resolved production tasks

- Terminal-Bench 2.0: 69.4% vs 65.4% (still behind GPT-5.4 at 75.1%)

- Notion Agent: +14% with 1/3 the tool errors

- XBOW visual acuity: 98.5% vs 54.5%

- CodeRabbit recall: +10%, precision stable

Even discounting “partners cherry-pick results”, the numbers look honest, especially SWE-bench Pro (it’s scrubbed for memorization).

What concerned me

Slower. 4.7 is noticeably slower than 4.6. The reason is no secret: adaptive thinking is now the only mode, and the model genuinely re-checks intermediate steps. Anthropic says it straight: “thinks more at higher effort levels, particularly on later turns in agentic settings”. For a 5-minute task that’s an extra 40-60 seconds. For a long agentic session, plus 10-20%. The tradeoff: reliability on hard problems. If you pay for speed in interactive UX, this is a minus. If you pay for autonomy on long tasks, it’s a plus.

Token burn is faster now. Several factors stack up:

- New tokenizer: the same text maps to 1.0-1.35x more tokens. Depends on content: code with non-Latin characters is closer to 1.35x, plain English closer to 1.0x.

- xhigh thinks a lot more than high. In Claude Code xhigh is now the default across all plans.

- Full-resolution images use up to 3x more tokens (up to 4784 tokens per image vs 1600 before).

Per-token price is unchanged, but the effective workflow cost is up 20-30%. Hex measured a useful side effect: low-effort 4.7 is roughly equivalent to medium-effort 4.6. That’s a compensation path if the task doesn’t need top-tier reasoning.
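A quick worked example of how tokenizer inflation alone moves the bill. The prices are the ones quoted earlier; the workload size (2M input, 400k output tokens) is a made-up illustration, and the sketch ignores extra thinking tokens and image costs, so treat it as a lower bound.

```python
INPUT_USD_PER_MTOK = 5.0    # Opus 4.7 input price quoted in this post
OUTPUT_USD_PER_MTOK = 25.0  # Opus 4.7 output price quoted in this post


def workflow_cost(input_tokens: float, output_tokens: float,
                  tokenizer_multiplier: float = 1.0) -> float:
    """Cost in USD when the same text maps to `tokenizer_multiplier` times more tokens."""
    scaled_in = input_tokens * tokenizer_multiplier
    scaled_out = output_tokens * tokenizer_multiplier
    return (scaled_in * INPUT_USD_PER_MTOK + scaled_out * OUTPUT_USD_PER_MTOK) / 1_000_000


# Hypothetical monthly workload measured in 4.6 tokens.
baseline = workflow_cost(2_000_000, 400_000)                              # 1.0x, plain English
worst_case = workflow_cost(2_000_000, 400_000, tokenizer_multiplier=1.35)  # upper end of the range
increase_pct = (worst_case / baseline - 1) * 100
```

With these inputs the bill goes from $20 to about $27, so the 1.35x ceiling alone accounts for a 35% increase before any effort-level effects.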

A word on max effort and limits. Don’t mix up the names: the Max subscription ($100-200/mo) and the max effort level are different things that unfortunately share a word. The problem is with the max effort level. Since March there’s been a massive signal in Claude Code GitHub issues and on Reddit: a single agentic run on max effort can burn your five-hour subscription window in 1-2 prompts. On high and xhigh this usually doesn’t happen - it’s specifically max that produces this effect. Part of the story trails back to background bugs (cache_read, closed in 2.1.91) and peak-hour throttling (5-11 AM PT, since March), but with 4.7 max got sharper: the tokenizer adds up to +35% tokens on the same text, adaptive thinking re-checks intermediate steps, subagents on max multiply the spend. If you’re experimenting with max - check /usage right after each such run, don’t wait until the end of the session.

Day 1 bug in Claude Code. Auto mode in version 2.1.111 broke: the safety classifier was returning claude-opus-4-7 temporarily unavailable on any bash request. It blocked not just writes but reads too. The fix in 2.1.112 shipped by evening. If you updated in the morning, check claude --version.
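If you want to script that check, for example in a team bootstrap script, a minimal sketch could look like this. It assumes the output of claude --version contains an x.y.z string somewhere; the exact output format is an assumption, and the helper names are hypothetical.

```python
import re

FIXED_VERSION = (2, 1, 112)  # first release with the Auto mode fix, per this post


def parse_version(text: str) -> tuple[int, ...]:
    """Extract the first x.y.z version found in the text as a tuple of ints."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no x.y.z version found in {text!r}")
    return tuple(int(part) for part in match.groups())


def has_auto_mode_fix(version_output: str) -> bool:
    """True if the reported version is 2.1.112 or newer."""
    # Tuples compare numerically, so 2.1.91 correctly sorts before 2.1.112.
    return parse_version(version_output) >= FIXED_VERSION
```

Feed it the captured stdout of claude --version (for instance via subprocess.run) and warn when it returns False.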

Cyber safeguards cut some tasks. 4.7 is the first model with real-time blocking on cyber threats. If your product does legitimate pentesting, vulnerability research, or red team work, you’ll get blocked. For those cases there’s a Cyber Verification Program, but the process isn’t fast.

API breaking changes

If you call the model directly via the Messages API (not through Claude Code or Managed Agents):

- temperature, top_p, top_k now return 400. Strip them from payloads entirely. For predictable behavior, use prompting, not sampling params (they never guaranteed identical outputs anyway).

- Extended thinking budgets are gone. thinking: {"type": "enabled", "budget_tokens": N} returns 400. Switch to adaptive thinking: thinking: {"type": "adaptive"} + output_config: {"effort": "high"}. Adaptive thinking is OFF by default, you have to enable it explicitly.

- Thinking content isn’t returned by default. display is now "omitted" by default. If your UI shows reasoning, set display: "summarized", otherwise you’ll see a long pause before output with no progress indicator.

- Prefill is removed (carried over from 4.6). Pre-filling assistant messages returns 400. Use structured outputs, the system prompt, or output_config.format.

- New tokenizer. /v1/messages/count_tokens will return a different number than 4.6 for the same text. Any client code that estimates tokens from character ratios or cached numbers needs retesting.

- Recommended max_tokens bump. For xhigh/max effort Anthropic suggests starting at 64k output tokens so the model has headroom for thinking + subagents.
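For a hand-rolled client, the first two changes and the prefill check can be sketched as a small payload rewrite. The helper names are hypothetical, and the field names are taken from the list above rather than verified against the API reference, so adapt before using.

```python
import copy

SAMPLING_PARAMS = ("temperature", "top_p", "top_k")  # now rejected with a 400


def migrate_payload(payload: dict, effort: str = "high") -> dict:
    """Return a copy of a 4.6-style request body adjusted for the changes listed above."""
    migrated = copy.deepcopy(payload)
    migrated["model"] = "claude-opus-4-7"
    for name in SAMPLING_PARAMS:
        migrated.pop(name, None)
    thinking = migrated.get("thinking")
    if isinstance(thinking, dict) and thinking.get("type") == "enabled":
        # budget_tokens is dropped: adaptive thinking plus an effort level replaces it.
        migrated["thinking"] = {"type": "adaptive"}
        migrated.setdefault("output_config", {}).setdefault("effort", effort)
    return migrated


def has_prefill(payload: dict) -> bool:
    """True if the last message is an assistant turn, i.e. a prefill that would be rejected."""
    messages = payload.get("messages", [])
    return bool(messages) and messages[-1].get("role") == "assistant"


old = {
    "model": "claude-opus-4-6",
    "temperature": 0.2,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "messages": [{"role": "user", "content": "Review this diff."}],
}
new = migrate_payload(old)
```

The function copies the payload instead of mutating it, so you can log a before/after diff while rolling the change out.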

Claude Code has automigration: run /claude-api migrate inside a project and the skill will walk the codebase and apply the model swap, params, effort calibration.

Behavior changes (not breaking, but audit anyway)

This won’t break the API, but may surprise you in prod:

- Response length calibrates to task complexity, not to a fixed verbosity. Simple requests get shorter, complex analysis gets longer. If you depend on a specific output length, spell it out.

- The model makes fewer tool calls by default, relies more on reasoning. To increase tool use, bump effort to high or xhigh.

- The model spawns fewer subagents by default. If you need parallel fan-out, ask for it explicitly.

- Tone is more direct. Less validation-forward phrasing (“great question!”), fewer emoji, more opinionated. If your product depends on 4.6’s warm tone, you need a style prompt.

- Progress updates are built-in for long agentic sessions. If your prompt had hacks like “every 3 tool calls summarize progress”, drop them, the model does this now.

- Stricter effort calibration. On low and medium the model scopes tightly to the request, doesn’t take “a bit more”. On low there’s a real risk of under-thinking on moderately complex tasks: raise the effort, don’t try to trick it with prompting.

Effort levels: how to choose

This is the single most important thing for migration. Anthropic says it outright: “effort will matter more for this model than for any prior Opus”. Test these recommendations against your workload:

- xhigh (new) - best choice for most coding and agentic cases. In Claude Code it’s now the default across all plans (on the API the default stays high, you need to set xhigh explicitly). Starting point for most tasks.

- high - balance of tokens and intelligence. Minimum for intelligence-sensitive use cases. Good for interactive work where response time matters.

- medium - cost-sensitive, you trade some intelligence for tokens. Simple code, routine fixes, substantive summaries.

- low - only for short scoped tasks and latency-sensitive workloads. Classification, extraction, format conversion.

Separately on max. This is not “turn it on for everything, more brain is better”. Anthropic and independent benchmarks measured it: on max you get overthinking even on simple tasks, diminishing returns past a certain point, tokens fly into thinking blocks with no benefit to the answer. Plus max is exactly where the “one task burned a five-hour slot” scenario described above tends to land.

When max is actually justified:

- Architectural decisions where the cost of being wrong is high (DB schema, API contracts, concurrency).

- Hard bugs with race conditions that the model misses at lower effort.

- Security review and audit where thoroughness beats time.

- A new unfamiliar area where the model needs to explore a solution space.

- Long autonomous tasks where absence of supervision is compensated by reasoning depth.

When max isn’t needed: feature additions, refactoring clear structure, writing tests, docs, ordinary bugfixes with clear reproduction. Keep the default at xhigh, escalate to max deliberately for the specific kind of work.

Practical tip: start at xhigh, measure quality/cost on your own eval. Don’t try to save with low on hard tasks, you’ll break the reasoning. Don’t try to “upgrade” simple tasks via max, you’ll burn the limit.
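One way to keep these rules from drifting is to encode them as a lookup in whatever dispatches your jobs. This is only a sketch: the task labels are hypothetical names I made up for the categories discussed above, and the mapping should be tuned against your own eval.

```python
# Hypothetical task labels mapped to the effort levels recommended above.
EFFORT_BY_TASK = {
    "classification": "low",
    "extraction": "low",
    "routine_fix": "medium",
    "summary": "medium",
    "interactive": "high",
    "coding": "xhigh",
    "agentic": "xhigh",
    "architecture": "max",
    "race_condition": "max",
    "security_review": "max",
}

DEFAULT_EFFORT = "xhigh"  # the suggested starting point for most tasks


def choose_effort(task_kind: str) -> str:
    """Pick an effort level for a task, falling back to xhigh for unknown kinds."""
    return EFFORT_BY_TASK.get(task_kind, DEFAULT_EFFORT)
```

Unknown task kinds fall back to xhigh rather than max, so nothing escalates to the most expensive level by accident.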

New tools that actually help

Task budgets (beta). An advisory token budget across the whole agentic loop: thinking + tool calls + final response. The model sees a running countdown and decides itself when to wrap up. Minimum 20k tokens. This is not a hard cap (unlike max_tokens), it’s a recommendation to the model. Enable it via header task-budgets-2026-03-13.

    output_config = {
        "effort": "high",
        "task_budget": {"type": "tokens", "total": 128000},
    }

When not to use it: open-ended tasks where quality beats speed. When to use it: long pipelines where you want spend control without hard timeouts.
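Here is a sketch of how the pieces might fit into one request, without actually sending anything. Two things are assumptions on my part: that the beta flag travels in the anthropic-beta header, and that 64000 is a sensible max_tokens placeholder. The helper name is hypothetical, and the task_budget shape is copied from the snippet above.

```python
MIN_TASK_BUDGET = 20_000                     # minimum stated above
TASK_BUDGET_BETA = "task-budgets-2026-03-13"  # beta flag named above


def build_request(prompt: str, total_tokens: int, effort: str = "high") -> tuple[dict, dict]:
    """Build (headers, body) for a Messages API call with an advisory task budget."""
    if total_tokens < MIN_TASK_BUDGET:
        raise ValueError(f"task budget must be at least {MIN_TASK_BUDGET} tokens")
    # Assumption: beta features are enabled through the anthropic-beta header.
    headers = {"anthropic-beta": TASK_BUDGET_BETA}
    body = {
        "model": "claude-opus-4-7",
        "max_tokens": 64000,  # placeholder hard cap; the task budget itself is only advisory
        "messages": [{"role": "user", "content": prompt}],
        "output_config": {
            "effort": effort,
            "task_budget": {"type": "tokens", "total": total_tokens},
        },
    }
    return headers, body


headers, body = build_request("Migrate the billing module.", total_tokens=128_000)
```

Validating the minimum on the client side gives a readable error instead of a rejected request.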

/ultrareview in Claude Code. A separate session that reads the diff and flags bugs like a senior engineer on PR review. Not a linter, a real design review. Pro and Max get 3 free ultrareviews to try. After three it draws from your normal token budget. Worth trying as a pre-commit gate on complex features.

Auto mode for Max. The model decides itself when to ask for permission and when to act. This is not bypass-permissions (where it just stays silent), it’s a middle mode with judgment. Useful for long agentic tasks: fewer interrupts, but not uncontrolled. Rolled out to Max users today specifically.

Dreaming. Converts short-term memory to long-term. In preview, not many details, but the direction is clear: a polished memory store out of the box.

Compared to competitors (April 2026)

From the last few days of benchmarks:

- Opus 4.7 takes agentic coding (SWE-bench Pro 64.3%), visual acuity on pen-testing (XBOW 98.5%), long-running agentic tasks, MCP-Atlas tool-use first place.

- GPT-5.4 takes terminal-bench (75.1% vs 69.4% for Opus 4.7), OSWorld computer-use (75%, the first to cross the 72.4% human baseline), pure math (MATH/AIME), GDPval knowledge work (83%). Cheaper than Opus: $2.50/$10 per 1M.

- Gemini 3.1 Pro takes multimodal breadth (up to 8.4h audio, 1h video, images, PDF in a single request), reasoning benchmarks (GPQA Diamond 94.3%, ARC-AGI-2 77.1%). 1M context, same as Opus. Cheapest of the three: $2/$12 per 1M.

Most of us didn’t have to choose: 4.7 got auto-rolled on subscription. It’s more useful to understand where 4.7 is actually stronger than alternatives, and where it makes sense to run something else alongside. Coding, agentic pipelines, and visual acuity on dense interfaces - 4.7 territory. Where alternatives stay more advantageous: terminal-heavy workflow runs better on GPT-5.4, native audio and video work is on Gemini 3.1 Pro, and the lowest price per token is also Gemini.

What to do right now

- If you’re on Claude Code Max/Pro: keep working, but check the version (2.1.112+). Auto mode was broken on 2.1.111.

- If you have a large system prompt: audit it. Literal instruction following breaks the places where you had an “unspoken agreement”. Remove hacks for behaviors 4.7 does natively (progress updates, self-checking, concise output).

- If you call the API directly: update your thinking config, strip sampling params, check tokenizer inflation on your own corpus via count_tokens.

- If you’re paying API for heavy workflows: budget +20-30% for the next month. Consider low-effort 4.7 ≈ medium-effort 4.6 as an optimization path.
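For the tokenizer check, the arithmetic is simple once you have per-document counts for both models. The sketch below assumes you have already collected those counts yourself (for example from count_tokens responses). The numbers in the example are invented, and the function name is hypothetical.

```python
def inflation_ratio(old_counts: list[int], new_counts: list[int]) -> float:
    """Corpus-level ratio of new to old token counts for the same documents."""
    if len(old_counts) != len(new_counts):
        raise ValueError("both lists must cover the same documents")
    total_old = sum(old_counts)
    if total_old == 0:
        raise ValueError("old counts sum to zero, nothing to compare")
    return sum(new_counts) / total_old


# Invented counts for three sample documents, 4.6 vs 4.7.
counts_46 = [1000, 2000, 500]
counts_47 = [1100, 2600, 500]
ratio = inflation_ratio(counts_46, counts_47)  # 4200 / 3500
```

Summing before dividing weights long documents more heavily, which matches how the bill is actually computed.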

Sources

- Anthropic: Introducing Claude Opus 4.7

- What’s new in Claude Opus 4.7 (API docs)

- Opus 4.6 → 4.7 migration guide

- Task budgets documentation

- Effort parameter documentation

- Adaptive thinking documentation

- High-resolution image support

- Auto mode bug (Claude Code #49254, fixed in 2.1.112)

- Implicator.ai: coding benchmarks comparison

- Decrypt: first review with token-usage observations

- Cyber Verification Program